Russ Allbery: Review: A Study in Honor

| Series: | Janet Watson Chronicles #1 |
| Publisher: | Harper Voyager |
| Copyright: | July 2018 |
| ISBN: | 0-06-269932-6 |
| Format: | Kindle |
| Pages: | 295 |

This is a post I wrote in June 2022, but did not publish back then. After first publishing it in December 2023, a perfectionist, insecure part of me unpublished it again. After receiving positive feedback, I slightly amended it and am republishing it now.

In this post, I talk about unpaid work in F/LOSS, using hackathons as an example, and why, in my opinion, the expectation of volunteer work is hurting diversity.

Disclaimer: I don't have all the answers, only some ideas and questions.

Indeed, while we have proven that there is a strong and significant correlation between income and participation in a free/libre software project, it is not possible for us to pronounce ourselves on the causality of this link.

In the French original text:

En effet, si nous avons prouvé qu'il existe une corrélation forte et significative entre le salaire et la participation à un projet libre, il ne nous est pas possible de nous prononcer sur la causalité de ce lien.

Said differently, it is certain that there is a relationship between income and F/LOSS contribution, but it's unclear whether working on free/libre software ultimately helps with finding a well-paid job, or whether having a well-paid job is what enables work on free/libre software. I would like to explore this question a bit further, mostly relying on my own observations, experiences, and discussions with F/LOSS contributors.

Maybe we need to imagine this cause-effect relationship over time: as a student, without children and with lots of free time, hopefully with some money from the state or the family, people can spend time on F/LOSS, collect experience, and earn recognition. Later, they find a well-paid job and turn unpaid F/LOSS contributions into a hobby, cementing their status in the community while at the same time generating a sense of well-being from working on the common good. This is quite a common scenario.

As the Flosspols study revealed, however, boys often get their own computer at the age of 14, while girls get one only at the age of 20. (These numbers might be slightly different now, and possibly many people don't own an actual laptop or desktop computer anymore; instead they own mobile devices, which do not exactly incite them to look beneath the surface, take things apart, learn, and appropriate technology.) In any case, the above scenario does not allow people who join F/LOSS later in life, e.g. when changing careers, to find their place.

I believe that F/LOSS projects cannot expect to have more women, people of color, people from working class backgrounds, and people from outside of Germany, France, USA, UK, Australia, and Canada on board as long as volunteer work is the status quo and waged labour an earned privilege.

START_CHARGE_THRESH_BAT0 and STOP_CHARGE_THRESH_BAT0 in /etc/tlp.conf.

I recently installed OpenBSD on my work ThinkPad, but struggled to find any information on how to set the thresholds under OpenBSD. After only finding a dead-end thread from 2021 on misc@, I started digging around on how to implement it myself. The acpithinkpad and acpibat drivers looked promising, and a bit of Google-fu led me to the following small announcement in the OpenBSD 7.4 release notes:

New sysctl(2) nodes for battery management, hw.battery.charge*. Support them with acpithinkpad(4) and aplsmc(4).

Lo and behold, setting the start and stop threshold in OpenBSD is simply a matter of setting hw.battery.chargestart and hw.battery.chargestop with sysctl. The documentation was not committed in time for the 7.4 release, but you can read it in -CURRENT's sysctl(2). I personally set the following values in /etc/sysctl.conf:

hw.battery.chargestart=40

hw.battery.chargestop=60
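As a rough illustration, the two lines above can be generated and sanity-checked before they are written to /etc/sysctl.conf. This is only a sketch in Python: the helper name `render_thresholds` is hypothetical, and the rule that the start value must be lower than the stop value is my assumption, not something taken from the sysctl(2) manual.

```python
def render_thresholds(start: int, stop: int) -> str:
    """Return sysctl.conf lines for the given charge thresholds (percent)."""
    # Assumption: both values are percentages, and charging should start
    # at a lower level than the one at which it stops.
    if not (0 <= start < stop <= 100):
        raise ValueError(f"invalid thresholds: start={start}, stop={stop}")
    return (
        f"hw.battery.chargestart={start}\n"
        f"hw.battery.chargestop={stop}\n"
    )


if __name__ == "__main__":
    # Prints the same two lines as in the configuration above.
    print(render_thresholds(40, 60), end="")
```

The output is plain text, so it can be reviewed before being appended to the configuration file by hand.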

Intro: Hi, I'm Melissa from Igalia and welcome to the Rainbow Treasure Map, a talk about advanced color management on Linux with AMD/SteamDeck.

Useful links: First of all, if you are not used to the topic, you may find

these links useful.

Context: When we talk about colors in the graphics chain, we should keep in

mind that we have a wide variety of source content colorimetry, a variety of

output display devices and also the internal processing. Users expect

consistent color reproduction across all these devices.

The userspace can use GPU-accelerated color management to get it. But this also

requires an interface with display kernel drivers that is currently missing

from the DRM/KMS framework.

Since April, I've been bothering the DRM community by sending patchsets from the work Joshua and I did to add driver-specific color properties to the AMD display driver. In parallel, discussions on defining a generic color management interface are still ongoing in the community. Moreover, we are still not clear about the diversity of color capabilities among hardware vendors.

To bridge this gap, we defined a color pipeline for Gamescope that fits the latest versions of AMD hardware. It delivers advanced color management features for gamut mapping, HDR rendering, SDR on HDR, and HDR on SDR.

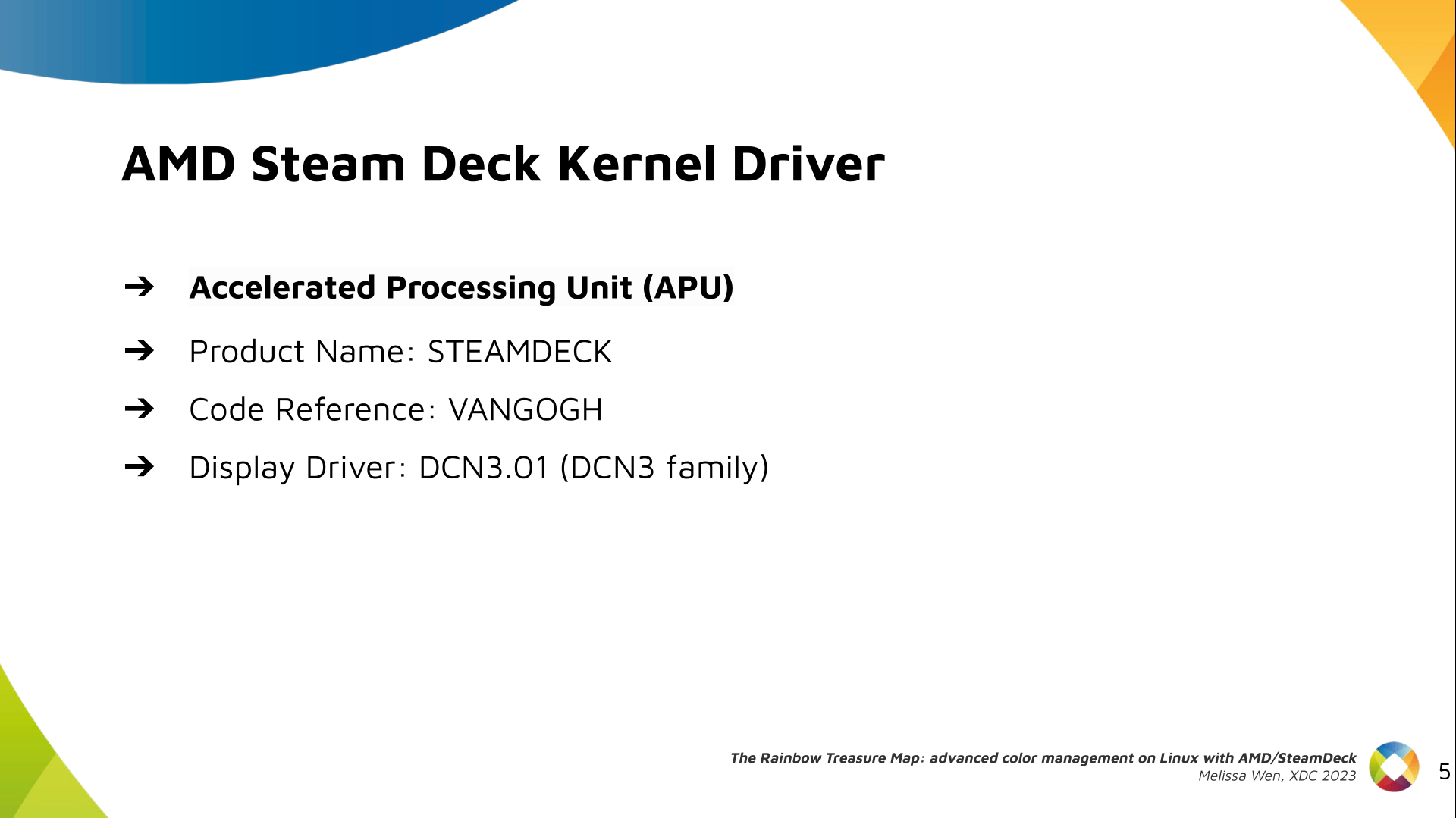

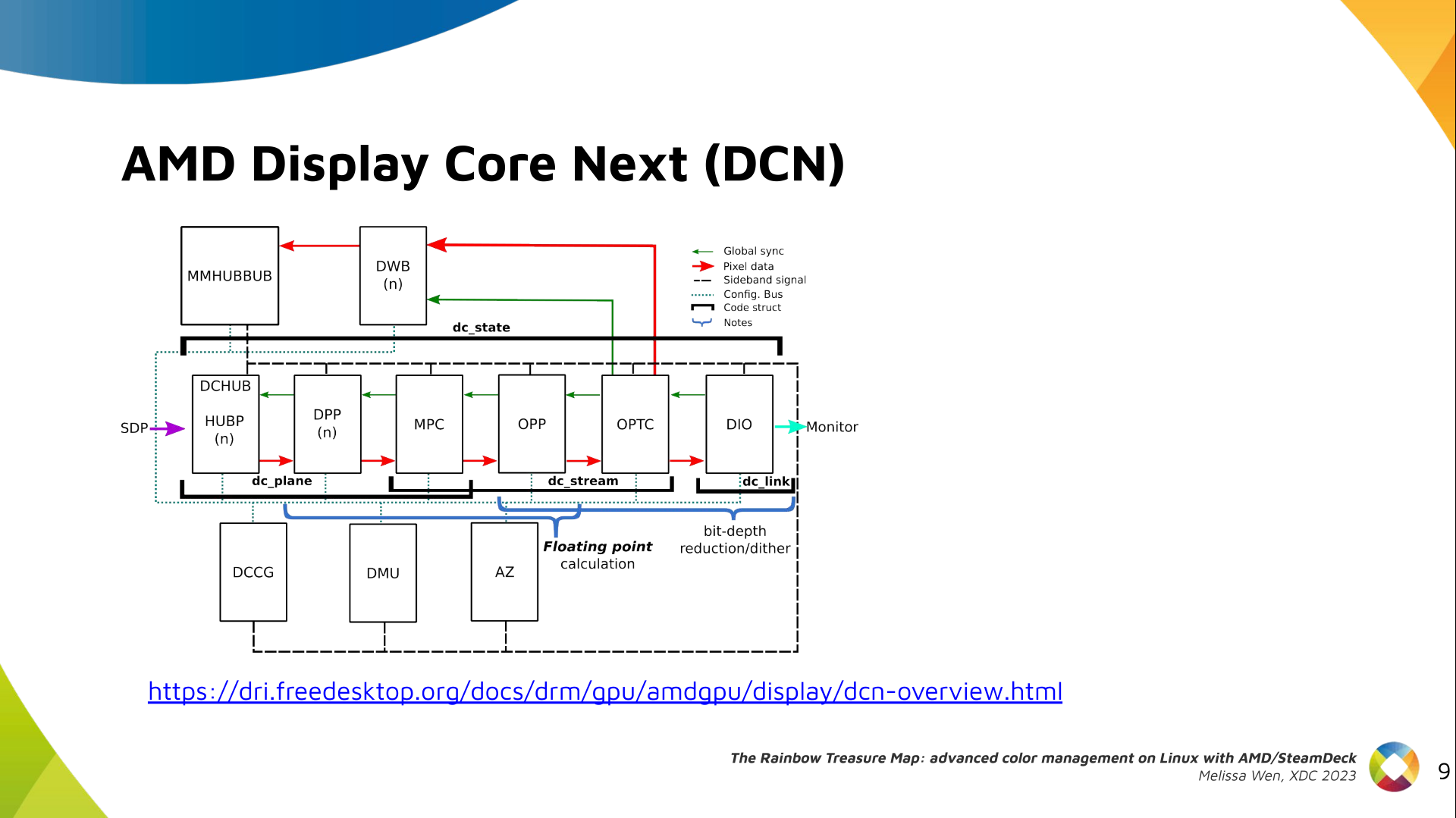

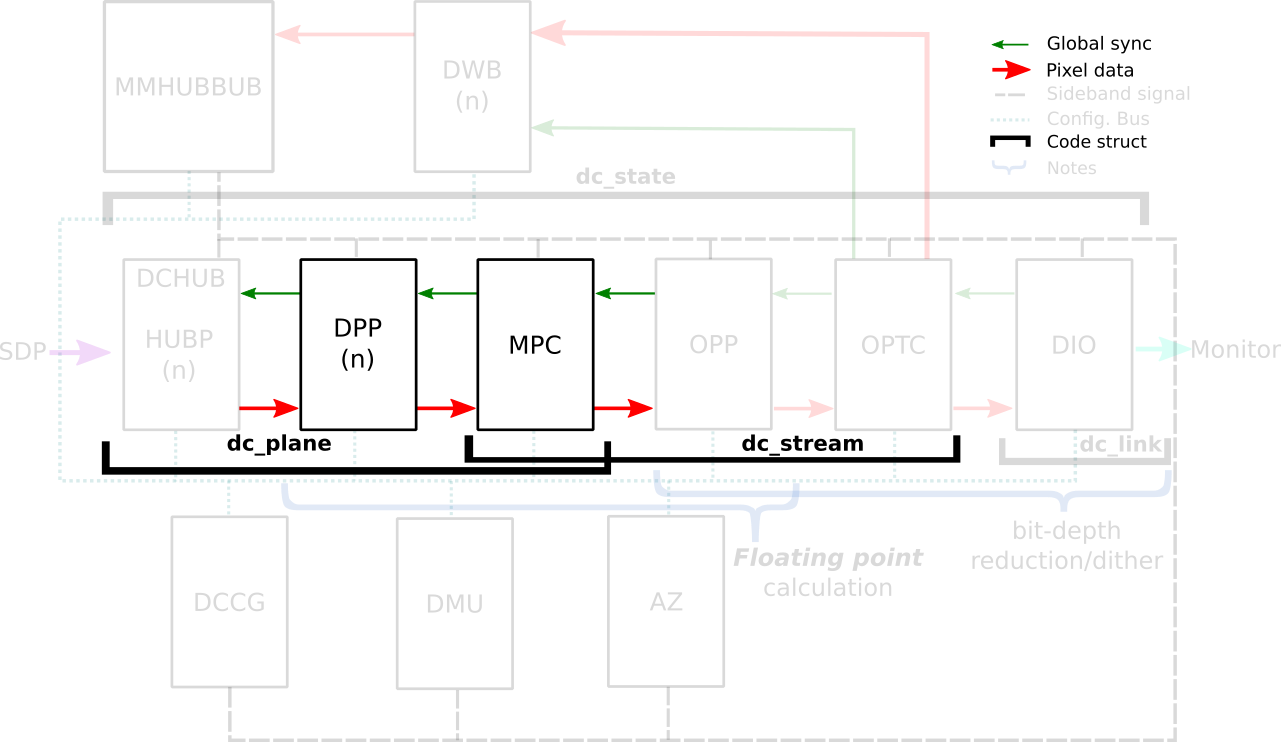

AMD/Steam Deck hardware: AMD frequently releases new GPU and APU generations. Each generation comes with a DCN version with display hardware improvements. Therefore, keep in mind that this work uses the AMD Steam Deck hardware and its kernel driver. The Steam Deck is an APU with a DCN3.01 display driver, part of the DCN3 family.

It's important to have this information since newer AMD DCN drivers inherit implementations from previous families, but each generation of AMD hardware may also introduce new color capabilities. Therefore, I recommend familiarizing yourself with the hardware you are working on.

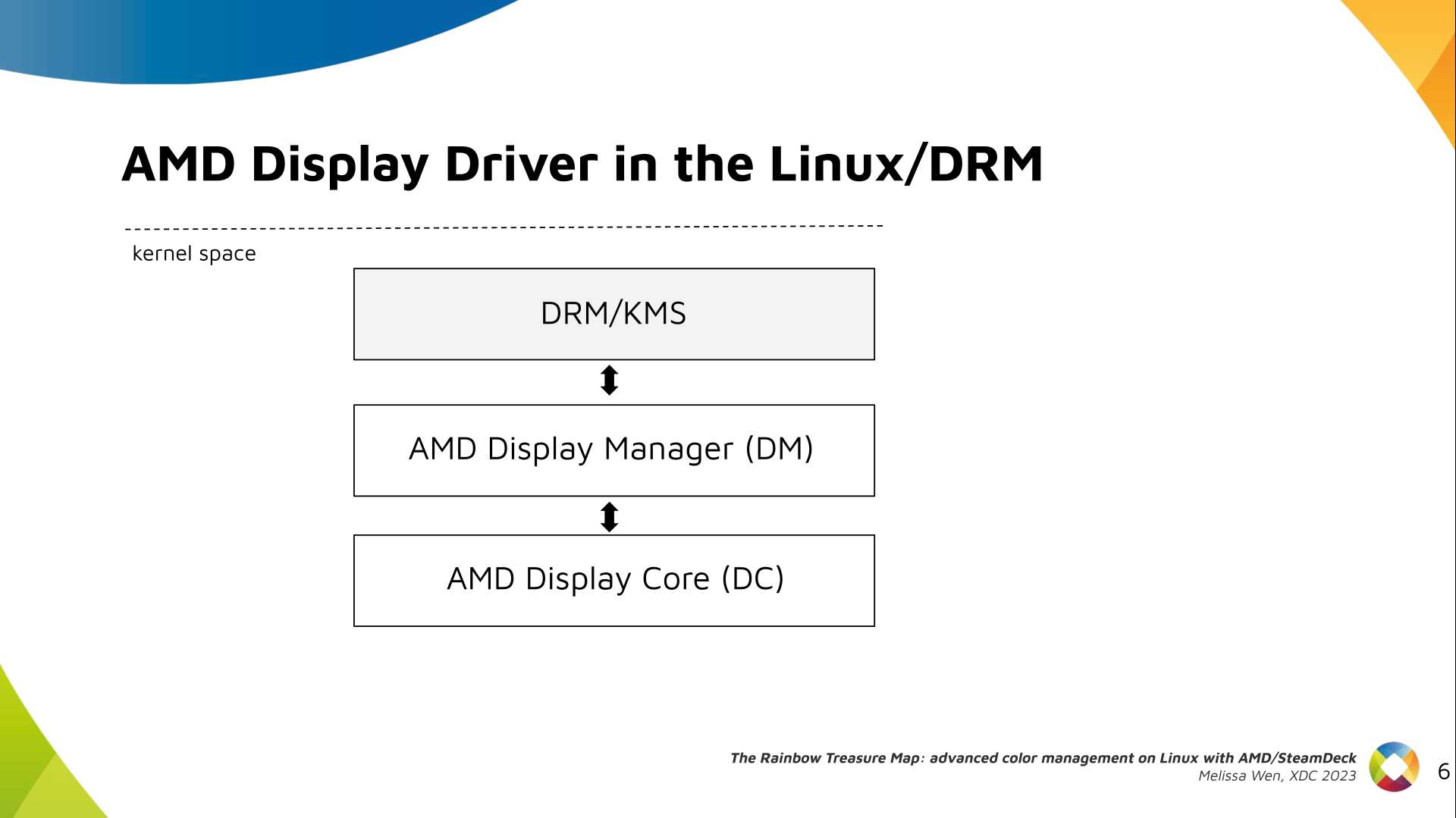

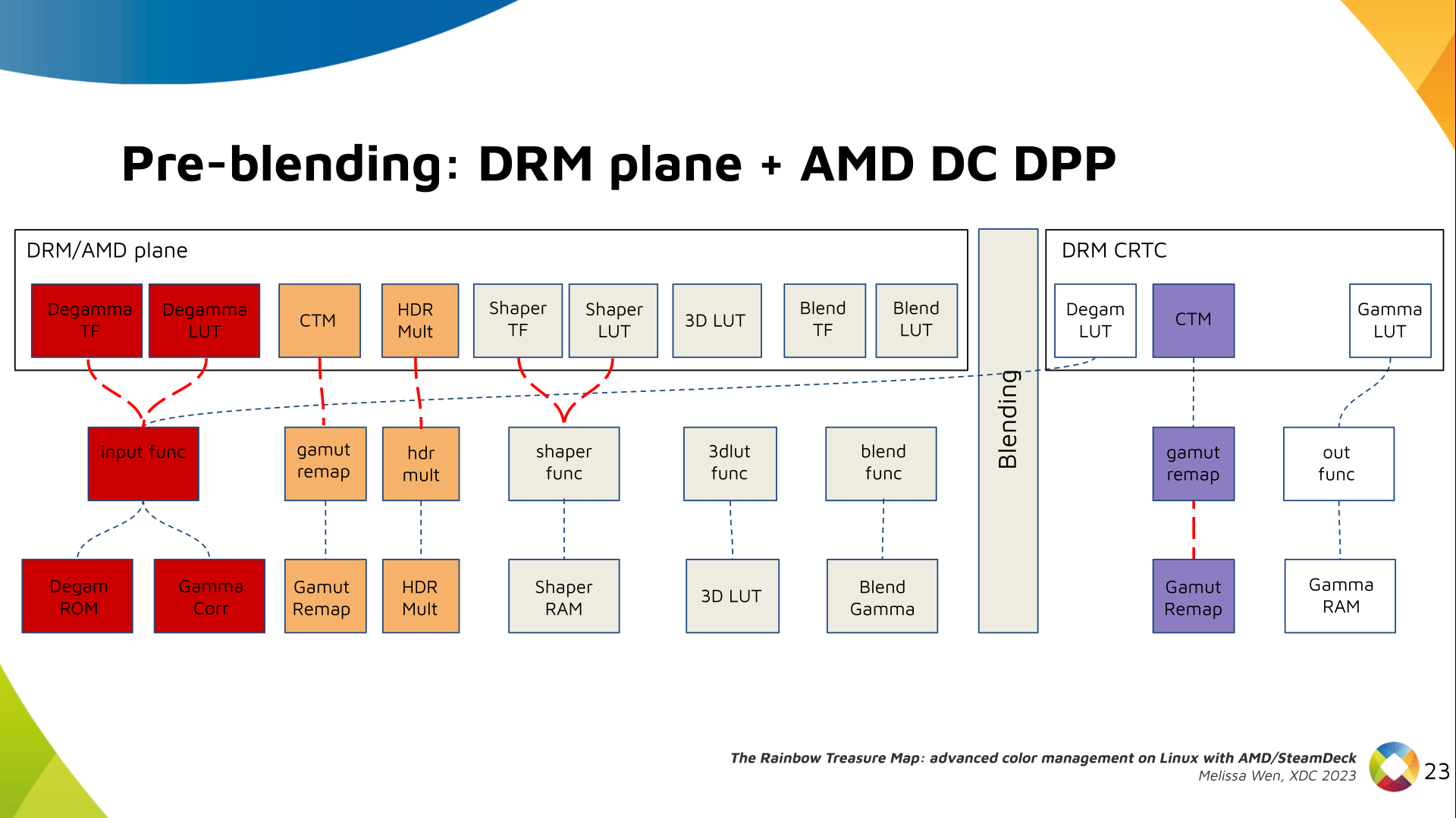

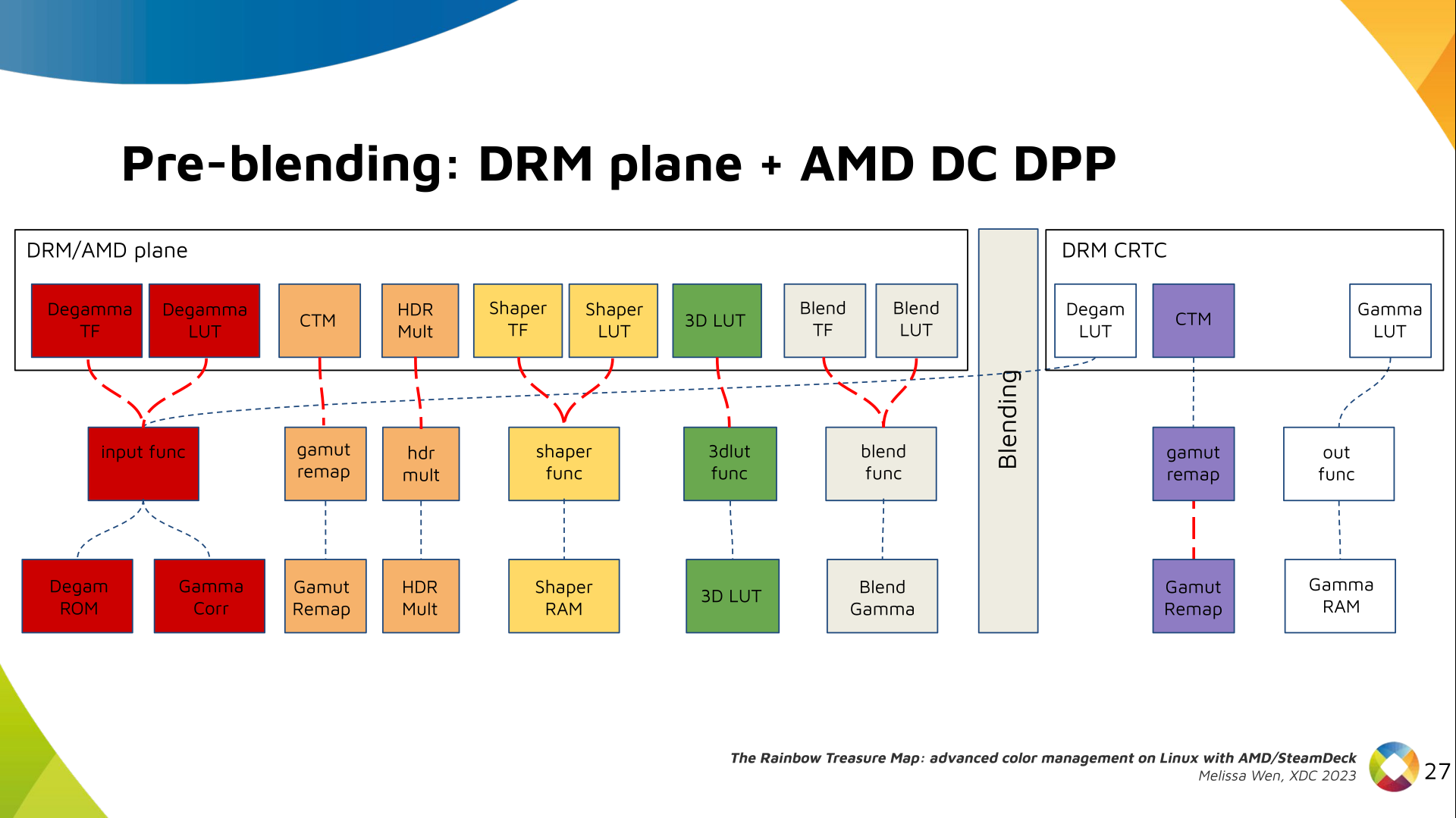

The AMD display driver in the kernel space: It consists of three layers, (1)

the DRM/KMS framework, (2) the AMD Display Manager, and (3) the AMD Display

Core. We extended the color interface exposed to userspace by leveraging

existing DRM resources and connecting them using driver-specific functions for

color property management.

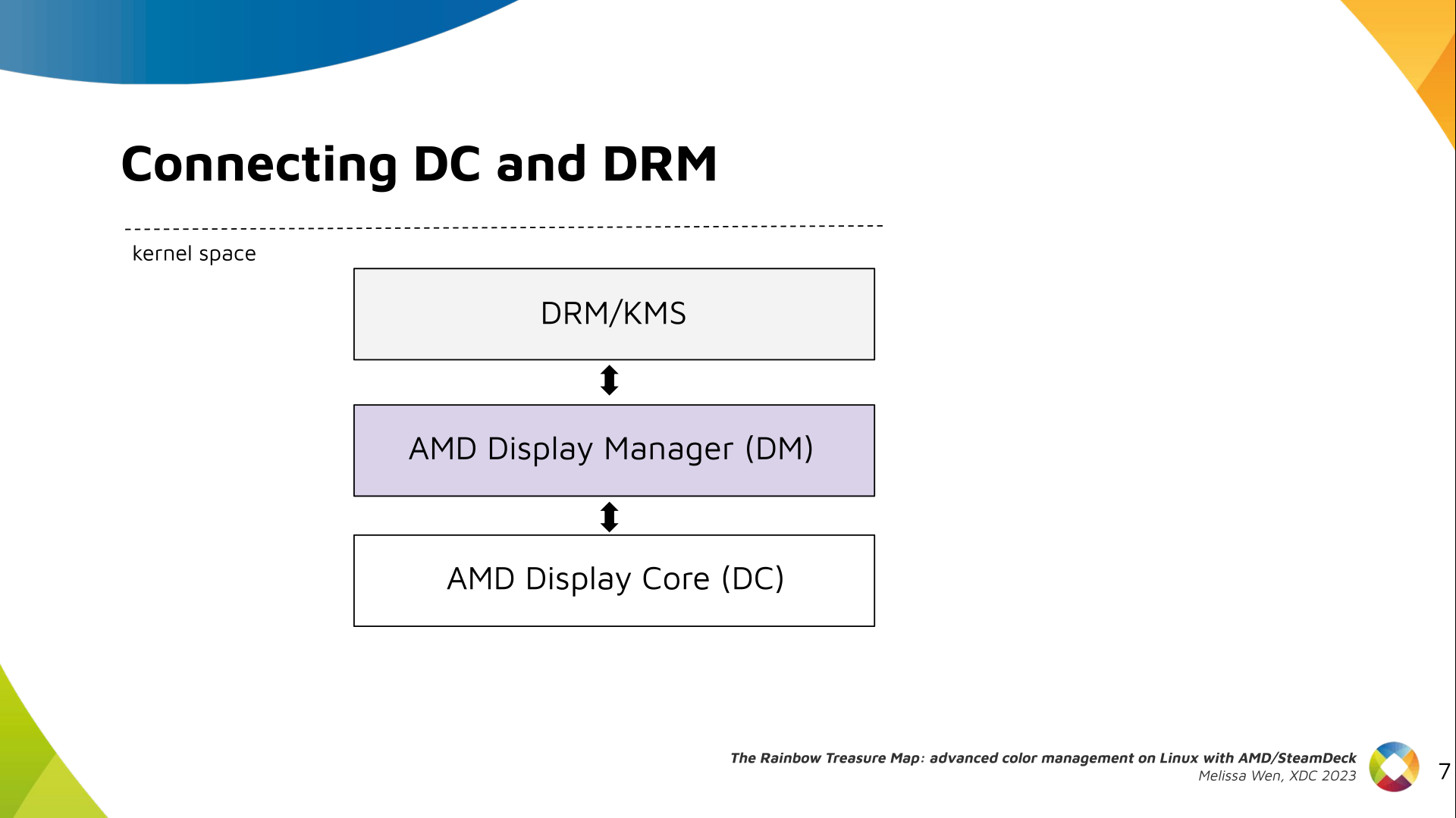

Bridging DC color capabilities and the DRM API required significant changes in

the color management of AMD Display Manager - the Linux-dependent part that

connects the AMD DC interface to the DRM/KMS framework.

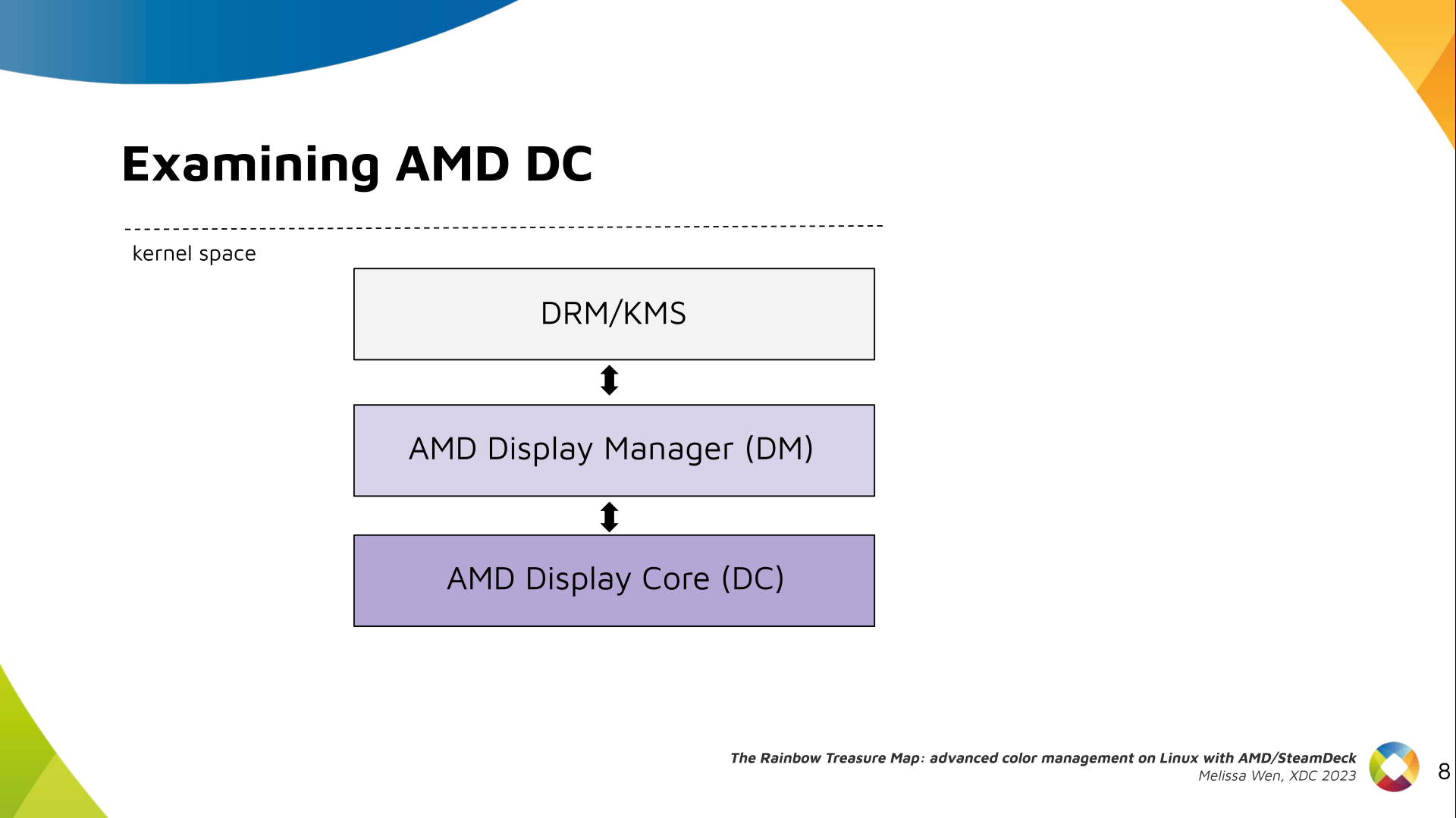

The AMD DC is the OS-agnostic layer. Its code is shared between platforms and DCN versions. Examining this part helps us understand the AMD color pipeline and hardware capabilities, since the machinery for hardware settings and resource management is already there.

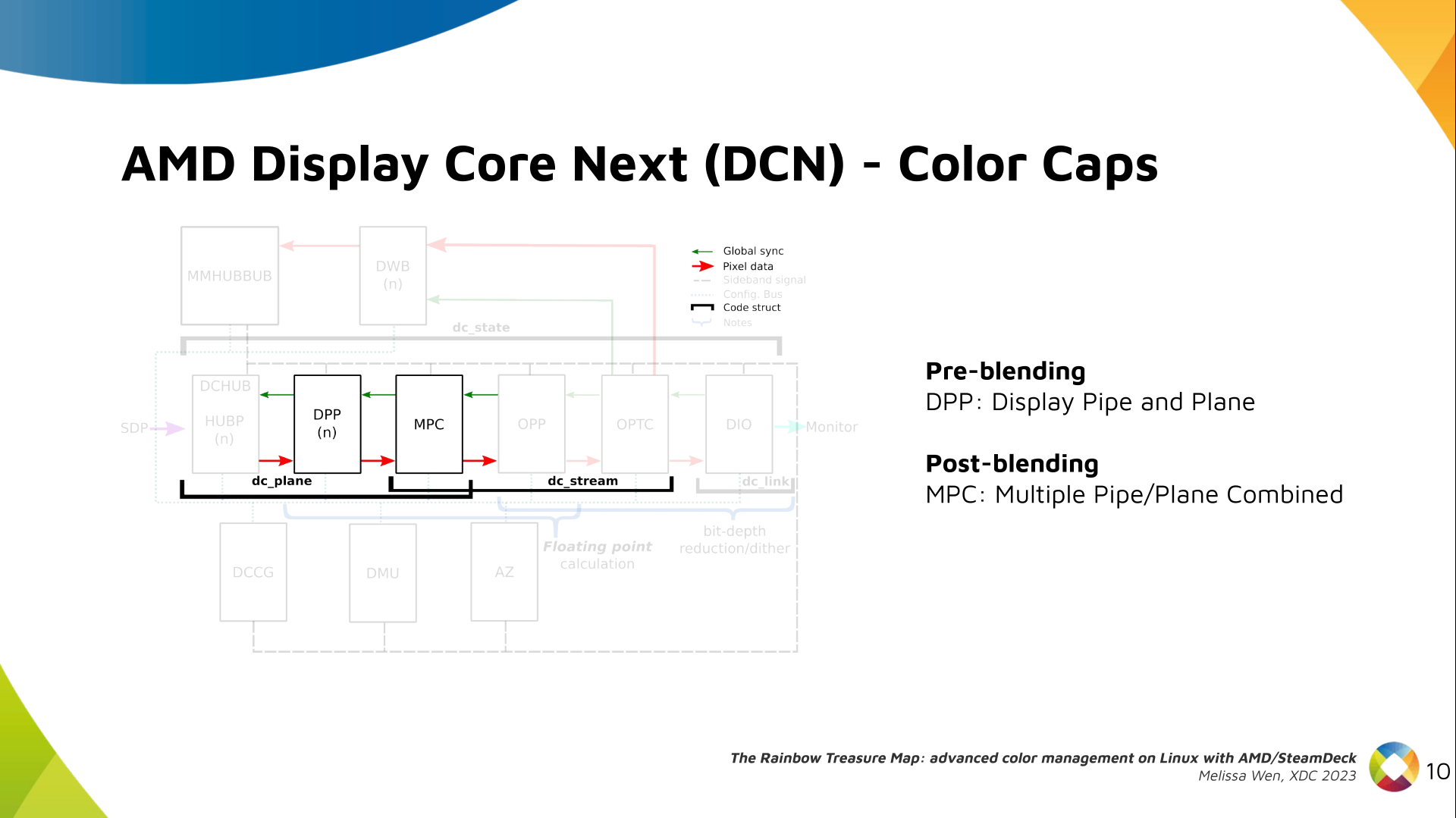

The newest architecture for AMD display hardware is the AMD Display Core Next.

In this architecture, two blocks have the capability to manage colors:

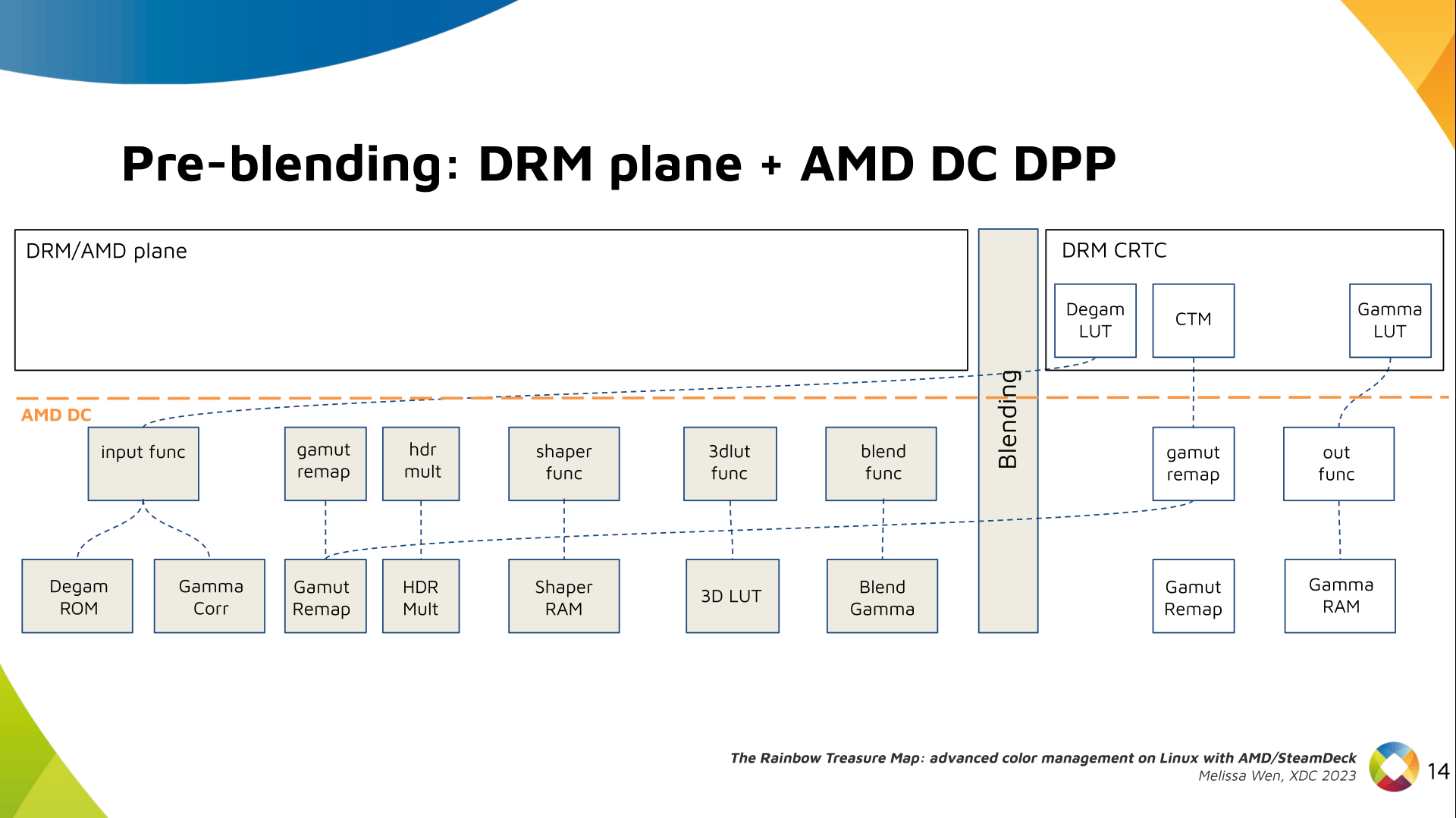

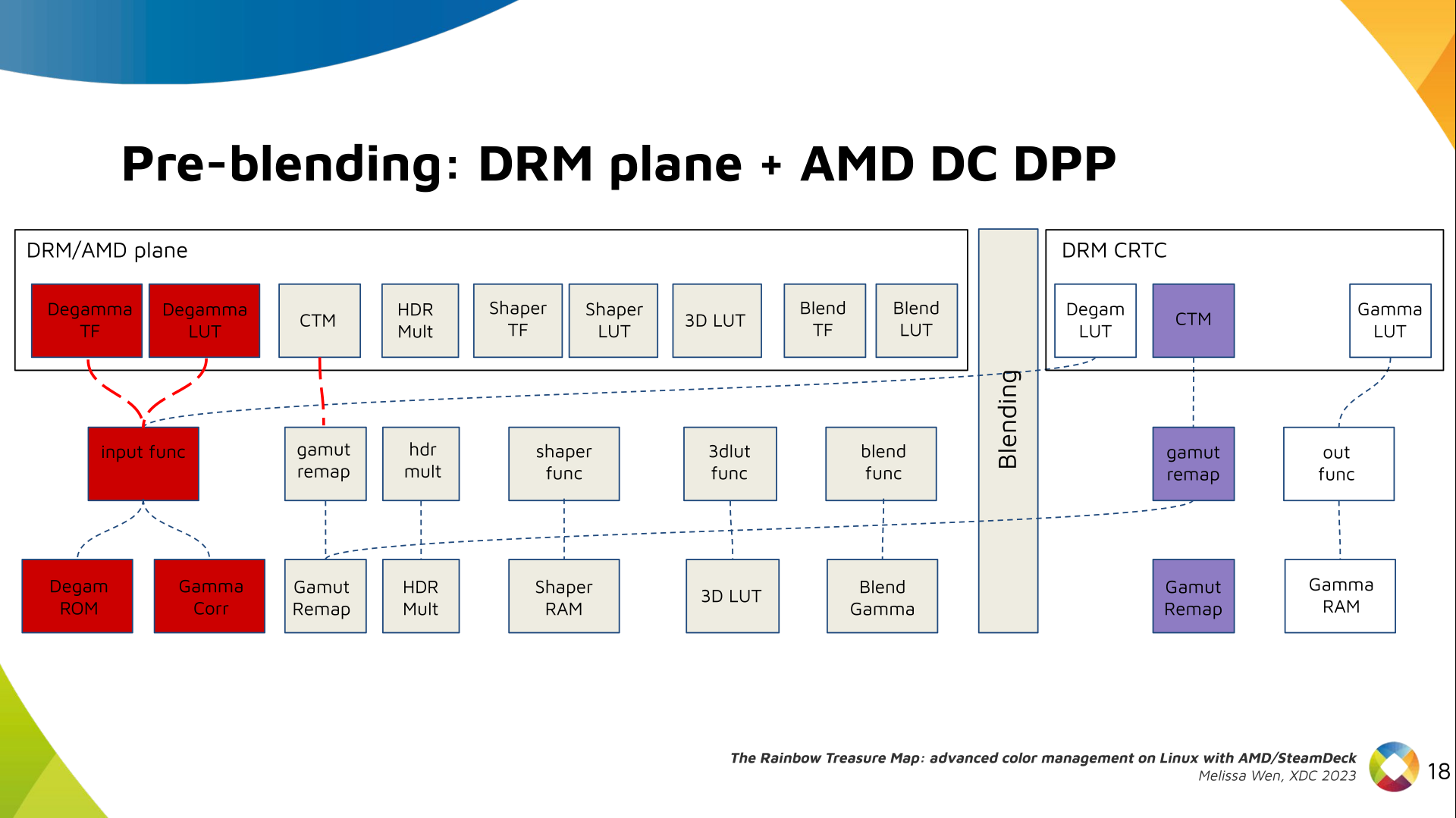

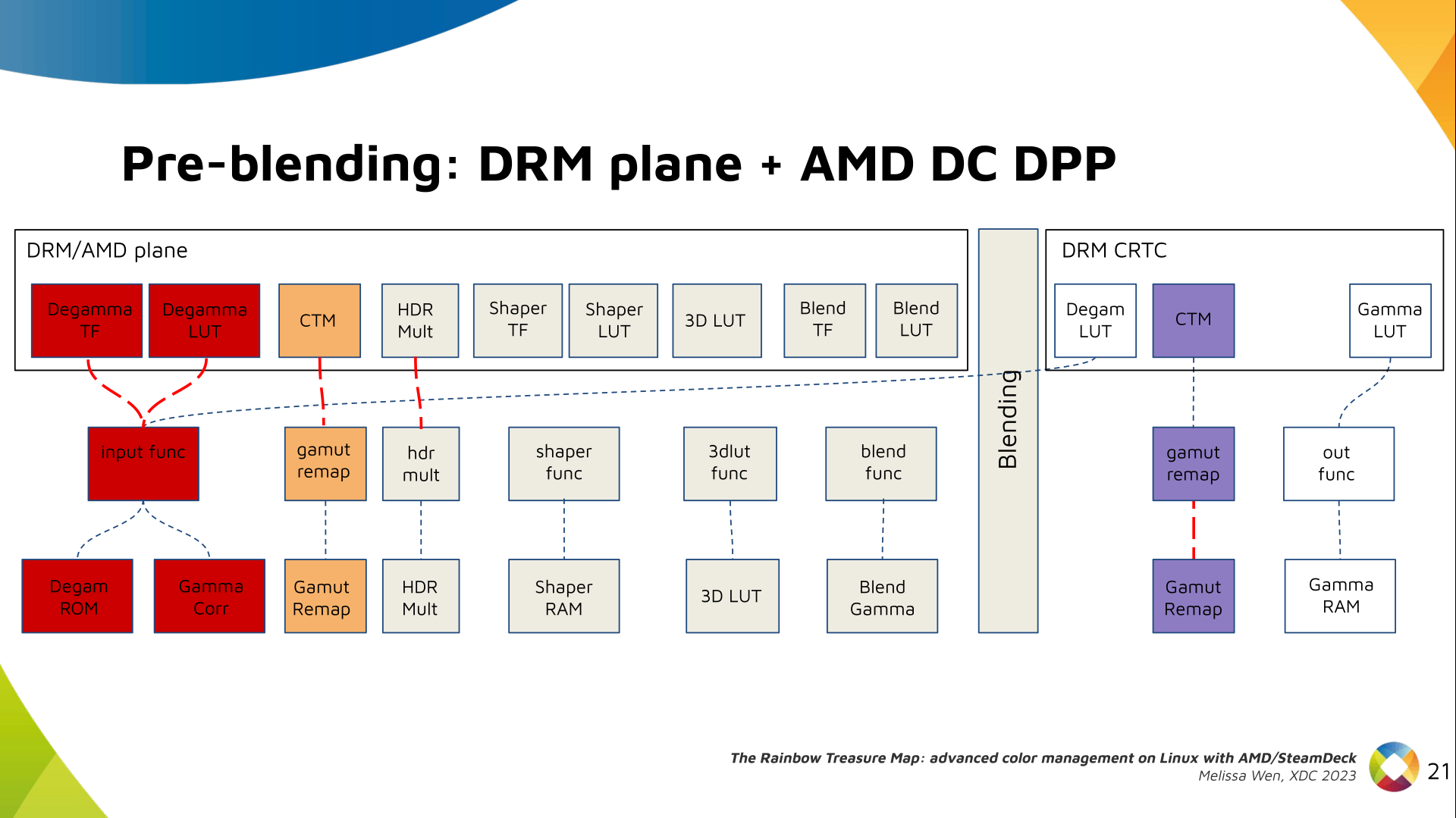

DRM plane color properties:

This is the DRM color management API before blending.

Nothing!

Except two basic DRM plane properties: color_encoding and color_range for the input colorspace conversion, which is not covered by this work.

In case you're not familiar with AMD shared code, what we need to do is basically draw a map and navigate there!

We have some DRM color properties after blending, but nothing before blending yet. But much of the hardware programming was already implemented in the AMD DC layer, thanks to the shared code.

Still, both the DRM interface and its connection to the shared code were missing. That's when the search begins!

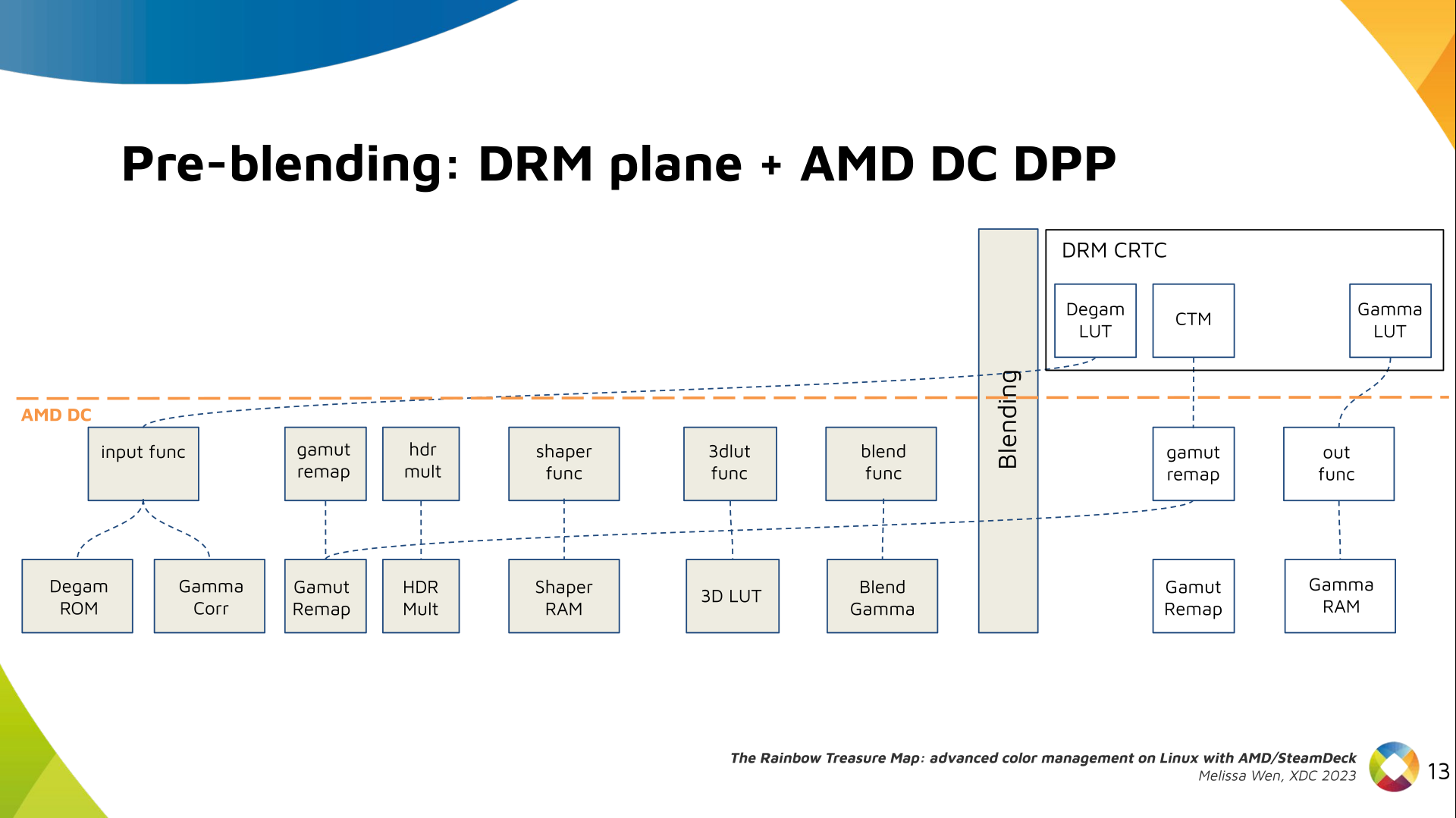

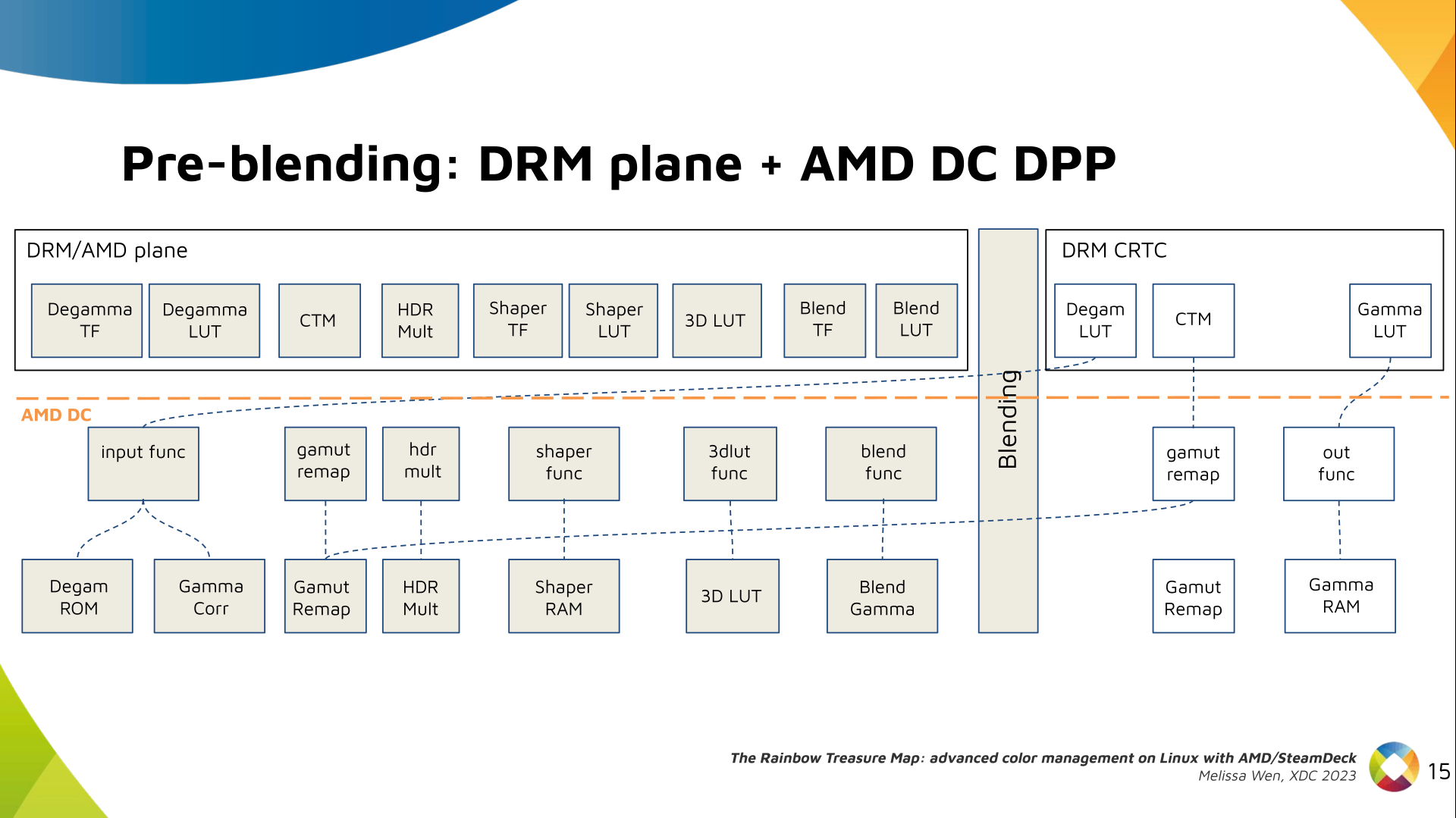

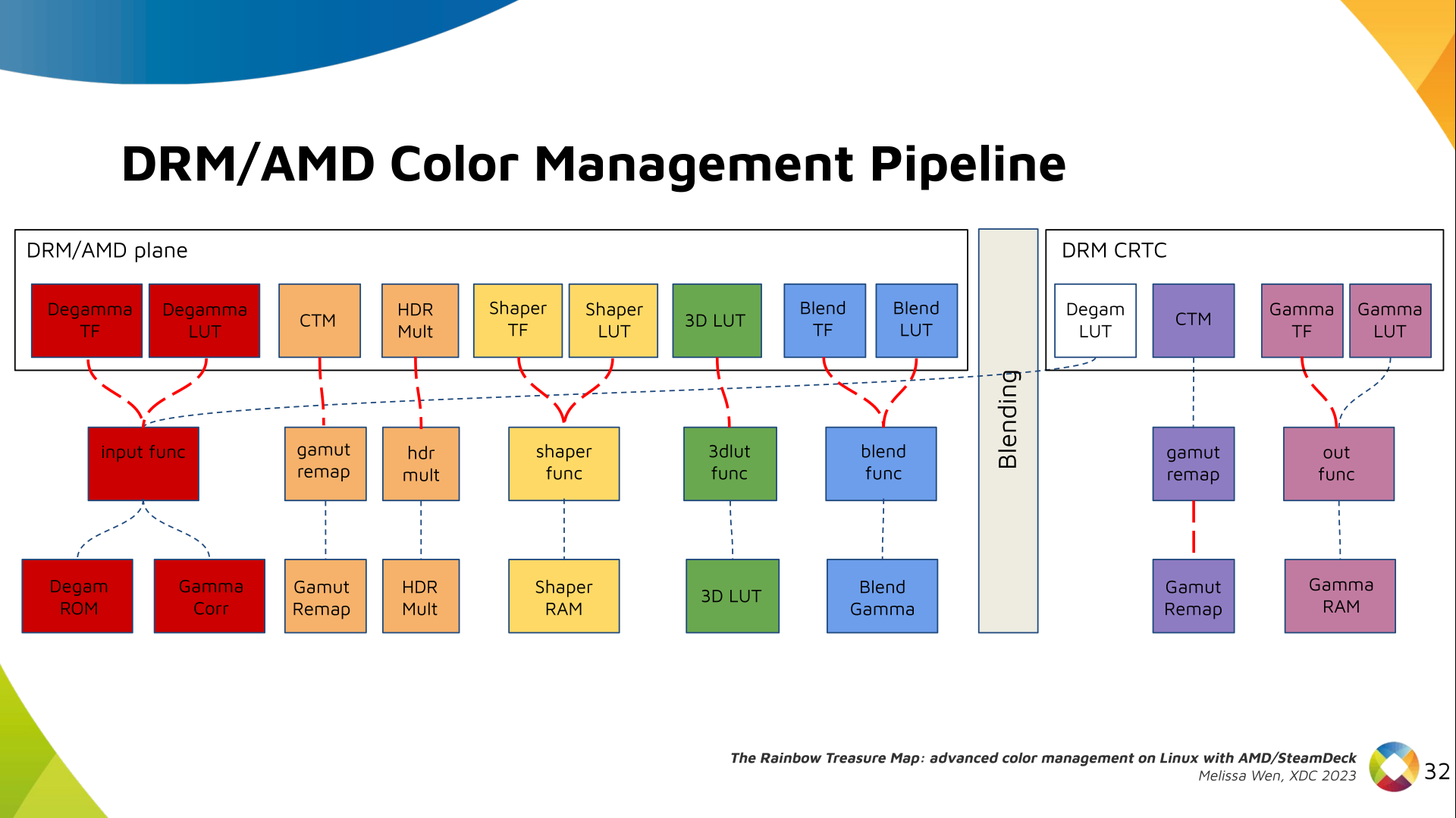

AMD driver-specific color pipeline:

Looking at the color capabilities of the hardware, we arrive at this initial set of properties. The path wasn't exactly like that. We had many iterations and discoveries until we reached this pipeline.

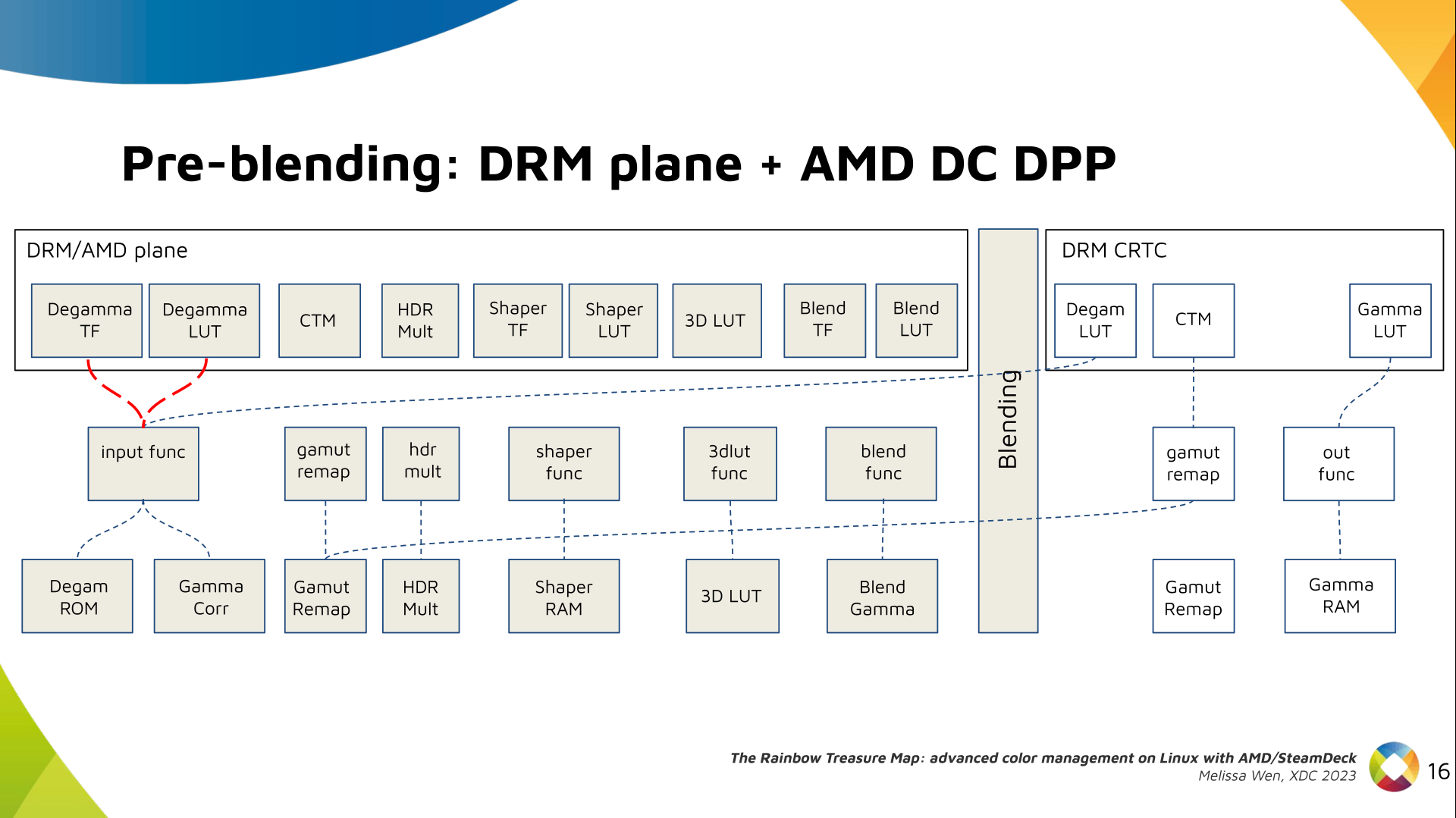

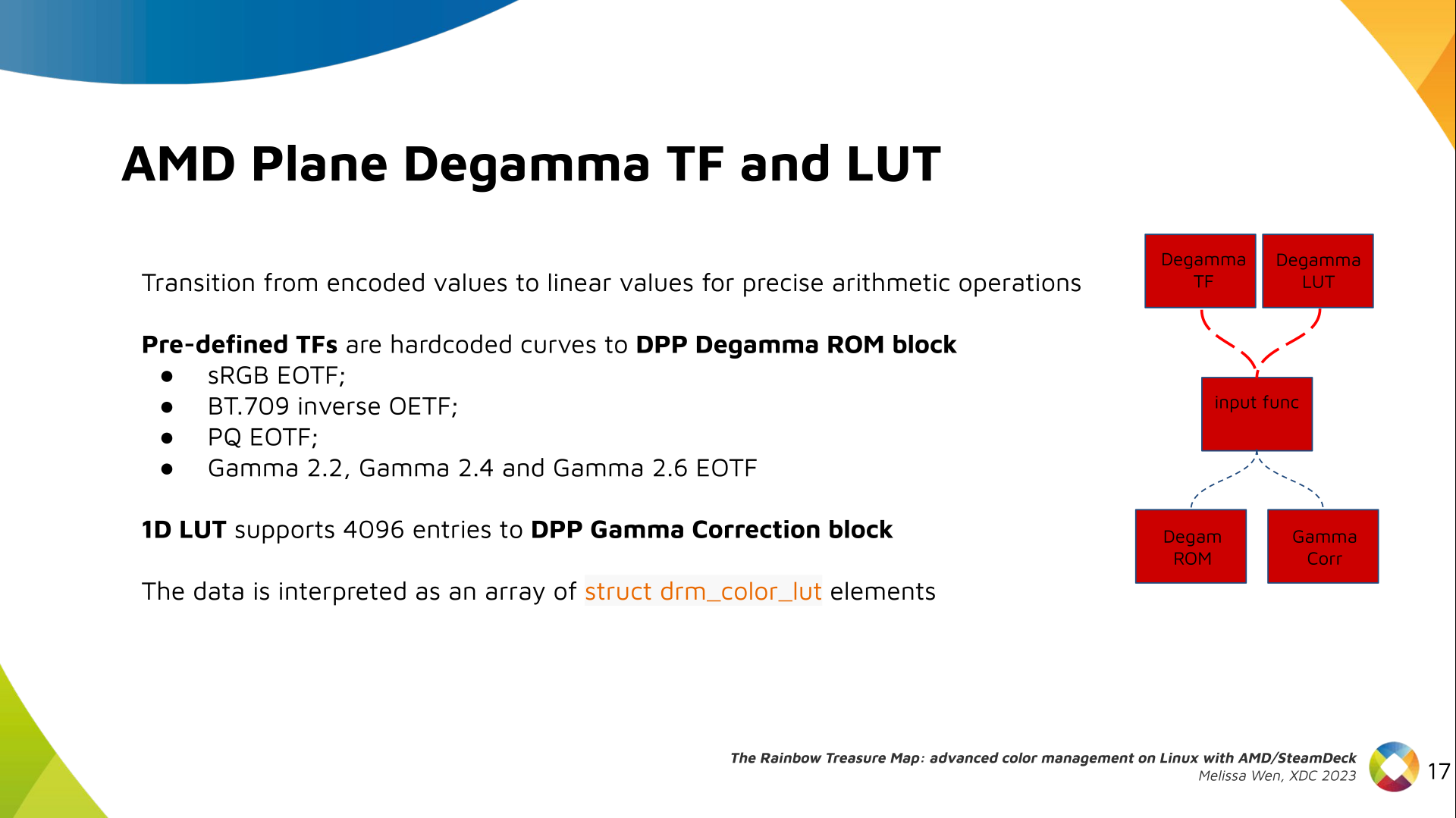

The Plane Degamma is our first driver-specific property before blending. It's used to linearize the color space from encoded values to linear light values.

We can use a pre-defined transfer function or a user lookup table (in short, LUT) to linearize the color space.

Pre-defined transfer functions for plane degamma are hardcoded curves that go to a specific hardware block called DPP Degamma ROM. It supports the following transfer functions: sRGB EOTF, BT.709 inverse OETF, PQ EOTF, and pure power curves Gamma 2.2, Gamma 2.4 and Gamma 2.6.

We also have a one-dimensional LUT. This 1D LUT has 4096 entries, the usual 1D LUT size in DRM/KMS. It's an array of drm_color_lut that goes to the DPP Gamma Correction block.
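To make the idea of a user degamma LUT more concrete, here is a small Python sketch of how userspace could sample the sRGB EOTF into a 4096-entry table. The 16-bit range per channel is an assumption based on the general layout of drm_color_lut, and the function names are hypothetical; this is not the Gamescope or driver code.

```python
LUT_SIZE = 4096    # number of entries, as stated above
MAX_U16 = 0xFFFF   # assumption: each channel is stored as a 16-bit value


def srgb_eotf(encoded: float) -> float:
    """Map an sRGB-encoded value in [0, 1] to linear light in [0, 1]."""
    if encoded <= 0.04045:
        return encoded / 12.92
    return ((encoded + 0.055) / 1.055) ** 2.4


def build_degamma_lut(size: int = LUT_SIZE) -> list[tuple[int, int, int]]:
    """Sample the EOTF at evenly spaced inputs; same curve for R, G and B."""
    lut = []
    for i in range(size):
        value = round(srgb_eotf(i / (size - 1)) * MAX_U16)
        lut.append((value, value, value))
    return lut


if __name__ == "__main__":
    lut = build_degamma_lut()
    print(len(lut), lut[0], lut[-1])
```

A pre-defined transfer function avoids building such a table at all, which is why it is the cheaper option when one of the hardcoded curves fits the content.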

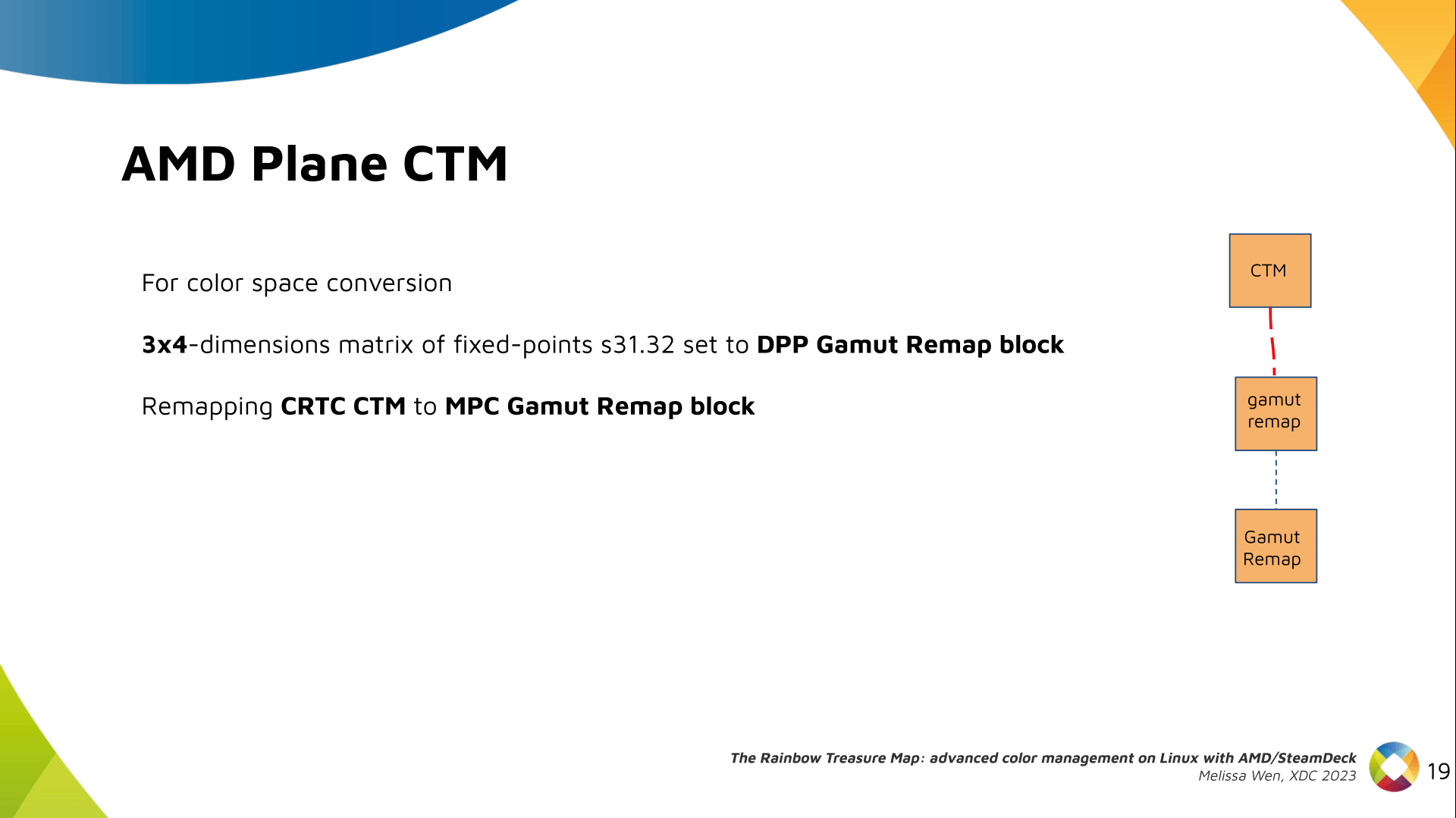

We now also have a color transformation matrix (CTM) for color space conversion.

It's a 3x4 matrix of fixed-point values that goes to the DPP Gamut Remap Block.

Both pre- and post-blending matrices previously went to the same color block. We worked on detaching them to clear both paths.

Now each CTM goes its own way.
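As an illustration of what a 3x4 matrix of fixed-point values means in practice, the Python sketch below applies such a matrix to one RGB value and encodes a coefficient as fixed point. Two things are assumptions on my side: that the fourth column is an additive offset, and that coefficients use the sign-magnitude S31.32 format documented for the generic DRM CTM property. The function names are hypothetical.

```python
def to_s31_32(value: float) -> int:
    """Encode a float as sign-magnitude S31.32 fixed point (assumed format)."""
    magnitude = round(abs(value) * (1 << 32))
    sign = (1 << 63) if value < 0 else 0
    return sign | magnitude


def apply_ctm_3x4(matrix, rgb):
    """Apply a row-major 3x4 matrix: 3x3 coefficients plus an offset column."""
    r, g, b = rgb
    return tuple(
        row[0] * r + row[1] * g + row[2] * b + row[3]
        for row in matrix
    )


# Identity transform: output equals input, no offset.
IDENTITY_3X4 = [
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
]

if __name__ == "__main__":
    print(apply_ctm_3x4(IDENTITY_3X4, (0.25, 0.5, 0.75)))
    print(hex(to_s31_32(1.0)), hex(to_s31_32(-0.5)))
```

Note that sign-magnitude is not two's complement: a negative coefficient only sets the top bit and keeps the magnitude bits unchanged.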

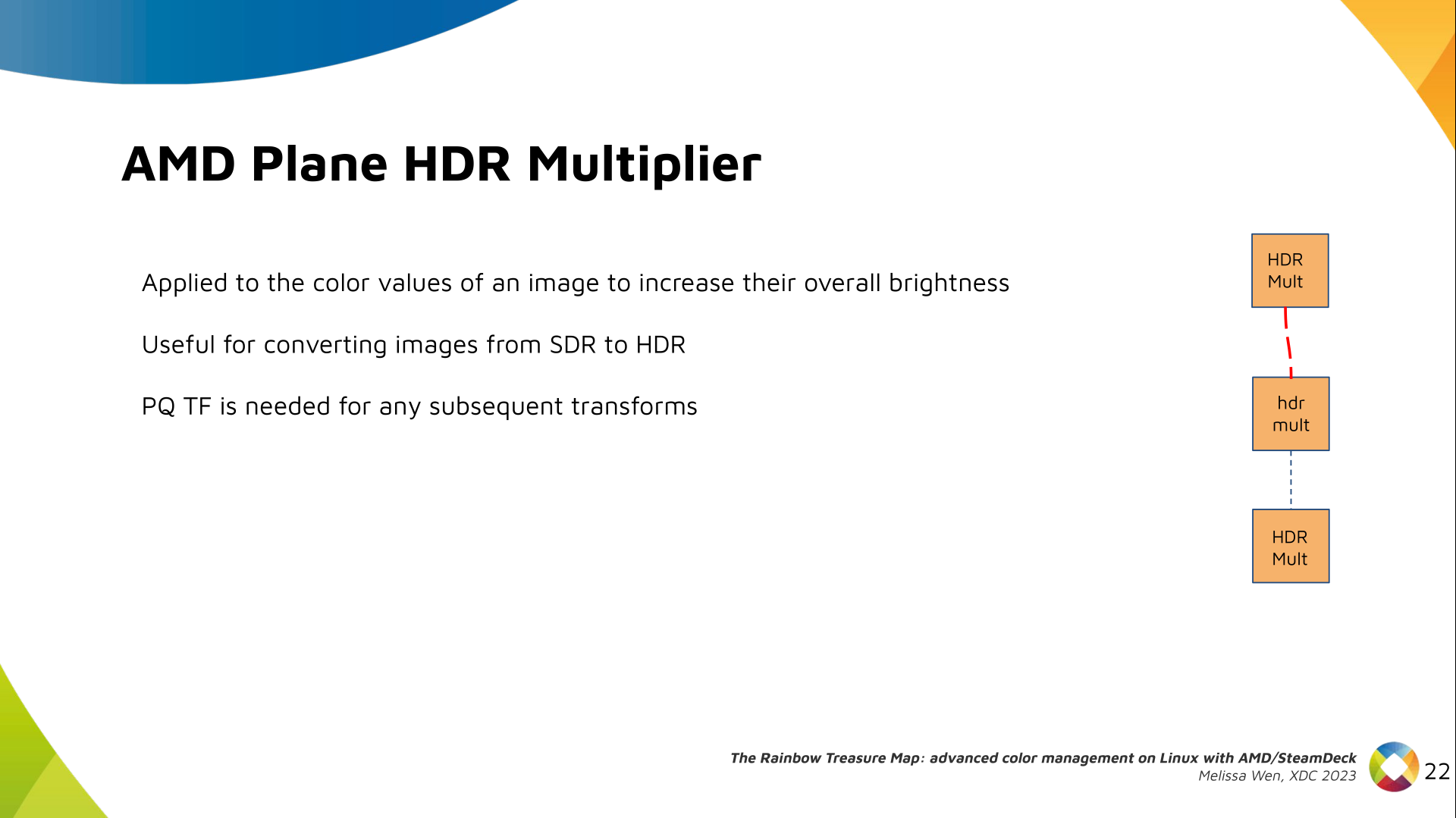

Next, the HDR Multiplier. HDR Multiplier is a factor applied to the color

values of an image to increase their overall brightness.

This is useful for converting images from a standard dynamic range (SDR) to a high dynamic range (HDR). As it can range beyond [0.0, 1.0], subsequent transforms need to use the PQ (HDR) transfer functions.

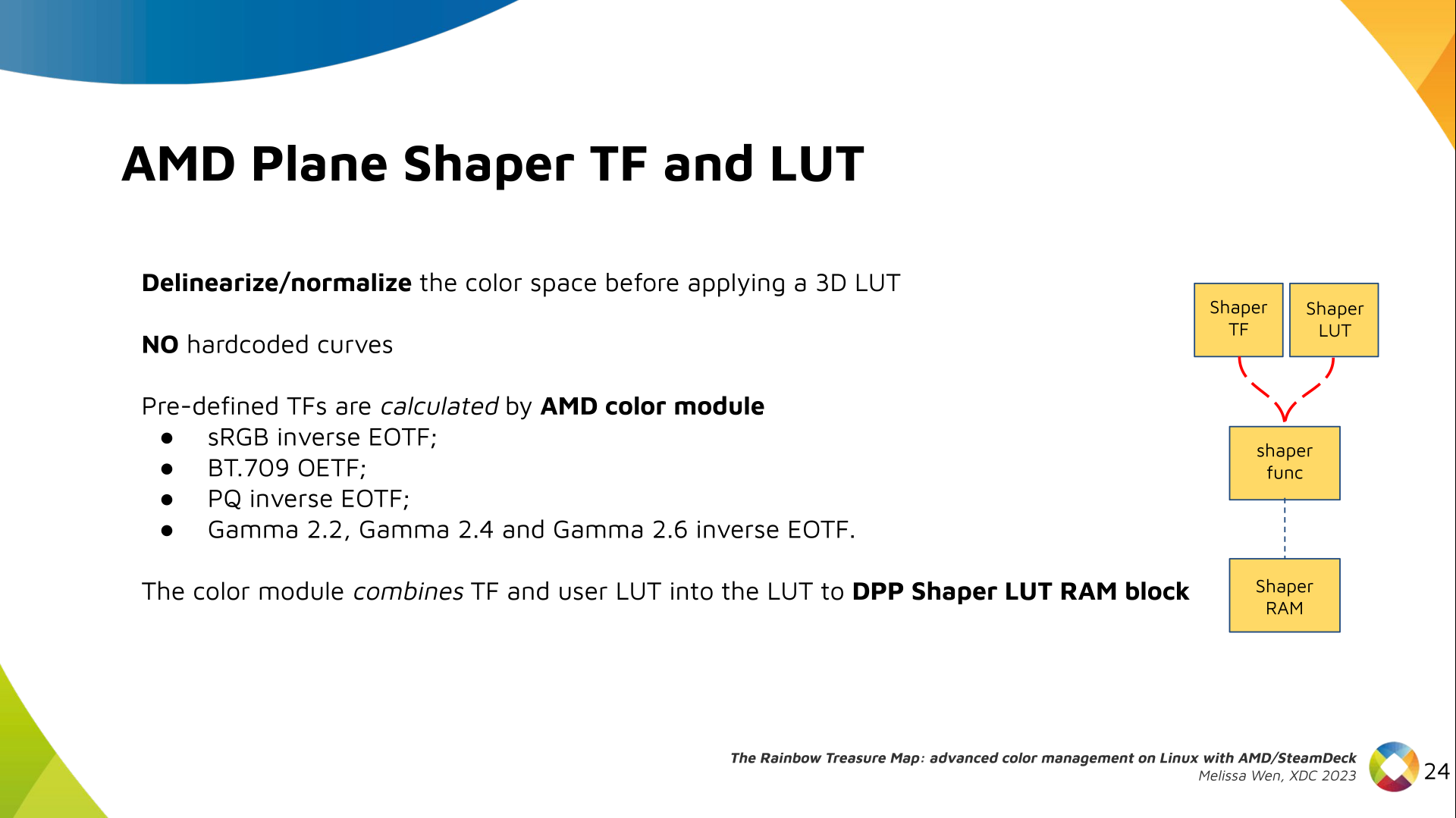

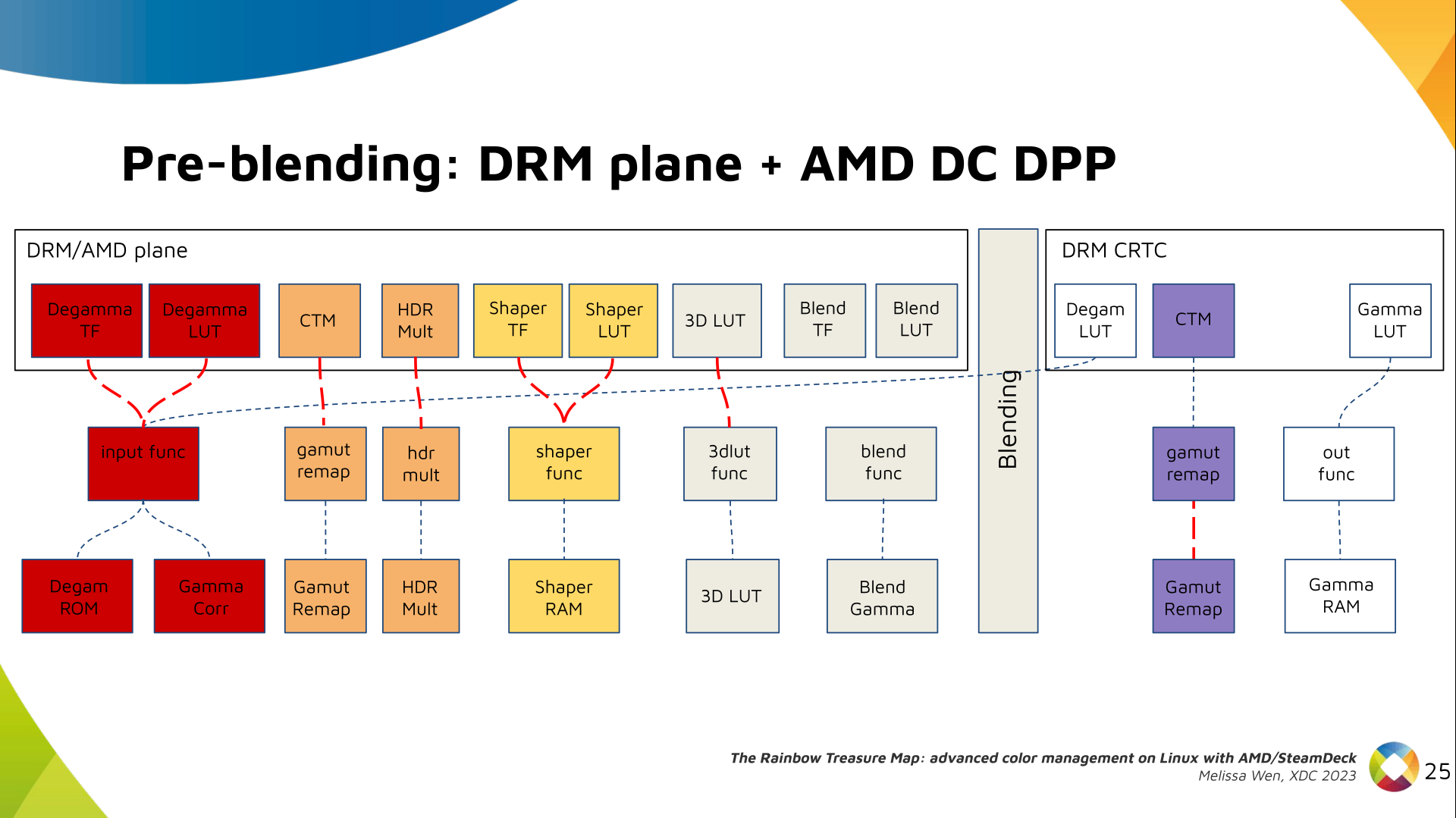

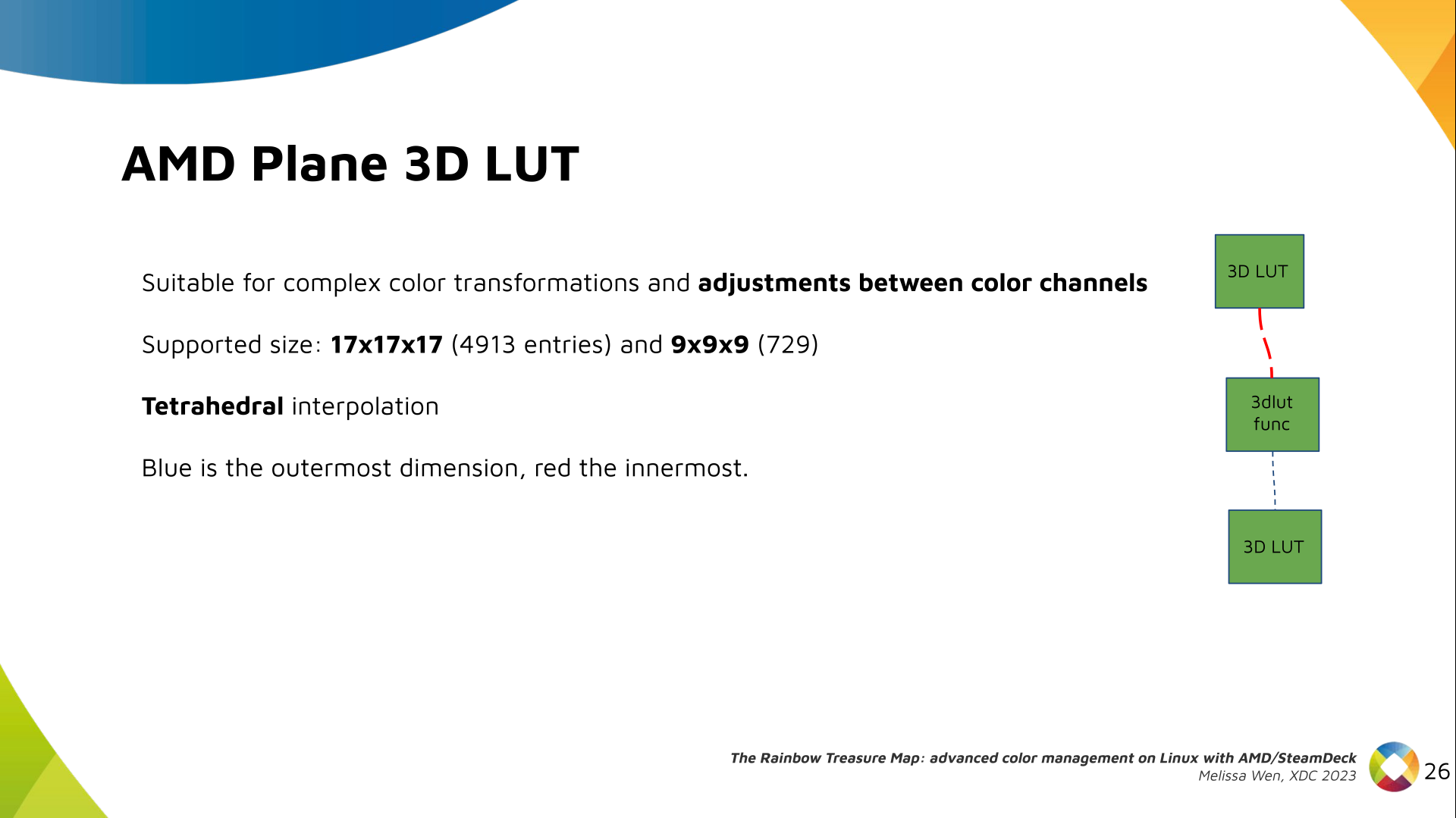

And we need a 3D LUT. But 3D LUT has a limited number of entries in each

dimension, so we want to use it in a colorspace that is optimized for human

vision. It means in a non-linear space. To deliver it, userspace may need one

1D LUT before 3D LUT to delinearize content and another one after to linearize

content again for blending.

The pre-3D-LUT curve is called Shaper curve. Unlike Degamma TF, there are no

hardcoded curves for shaper TF, but we can use the AMD color module in the

driver to build the following shaper curves from pre-defined coefficients. The

color module combines the TF and the user LUT values into the LUT that goes to

the DPP Shaper RAM block.

Finally, our rockstar, the 3D LUT. 3D LUT is perfect for complex color

transformations and adjustments between color channels.

3D LUT is also more complex to manage and requires more computational resources; as a consequence, its number of entries is usually limited. To overcome this restriction, the array contains samples from the approximated function and values between samples are estimated by tetrahedral interpolation. AMD supports 17 and 9 as the size of a single dimension. Blue is the outermost dimension, red the innermost.
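The layout and the interpolation described above can be sketched in a few lines of Python. This is a software model under stated assumptions, not what the hardware does internally: inputs are normalized to [0, 1], the LUT is a flat list of RGB tuples, and all function names are hypothetical. The only facts taken from the text are the per-dimension size of 17, the blue-outermost/red-innermost ordering, and the use of tetrahedral interpolation.

```python
LUT_DIM = 17  # one of the two per-dimension sizes mentioned above


def lut_index(r: int, g: int, b: int, n: int = LUT_DIM) -> int:
    """Flat index with blue as the outermost and red as the innermost axis."""
    return (b * n + g) * n + r


def identity_lut(n: int = LUT_DIM):
    """3D LUT that maps every color to itself."""
    step = 1.0 / (n - 1)
    return [
        (r * step, g * step, b * step)
        for b in range(n)
        for g in range(n)
        for r in range(n)
    ]


def sample_tetrahedral(lut, rgb, n: int = LUT_DIM):
    """Interpolate the LUT at an RGB point in [0, 1]^3."""
    pos = [min(max(c, 0.0), 1.0) * (n - 1) for c in rgb]
    base = [min(int(p), n - 2) for p in pos]
    frac = [p - b for p, b in zip(pos, base)]

    # Walk from the lower corner of the cell to its upper corner, stepping
    # along the axis with the largest fractional part first. The corners
    # visited are the vertices of the tetrahedron containing the point.
    corner = list(base)
    previous = lut[lut_index(*corner, n)]
    result = list(previous)
    for axis in sorted(range(3), key=lambda a: frac[a], reverse=True):
        corner[axis] += 1
        current = lut[lut_index(*corner, n)]
        result = [acc + frac[axis] * (cur - prev)
                  for acc, cur, prev in zip(result, current, previous)]
        previous = current
    return tuple(result)


if __name__ == "__main__":
    lut = identity_lut()
    print(len(lut), sample_tetrahedral(lut, (0.3, 0.6, 0.9)))
```

Only four of the eight cell corners are read per sample, which is one reason tetrahedral interpolation is popular for 3D LUTs compared to trilinear interpolation.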

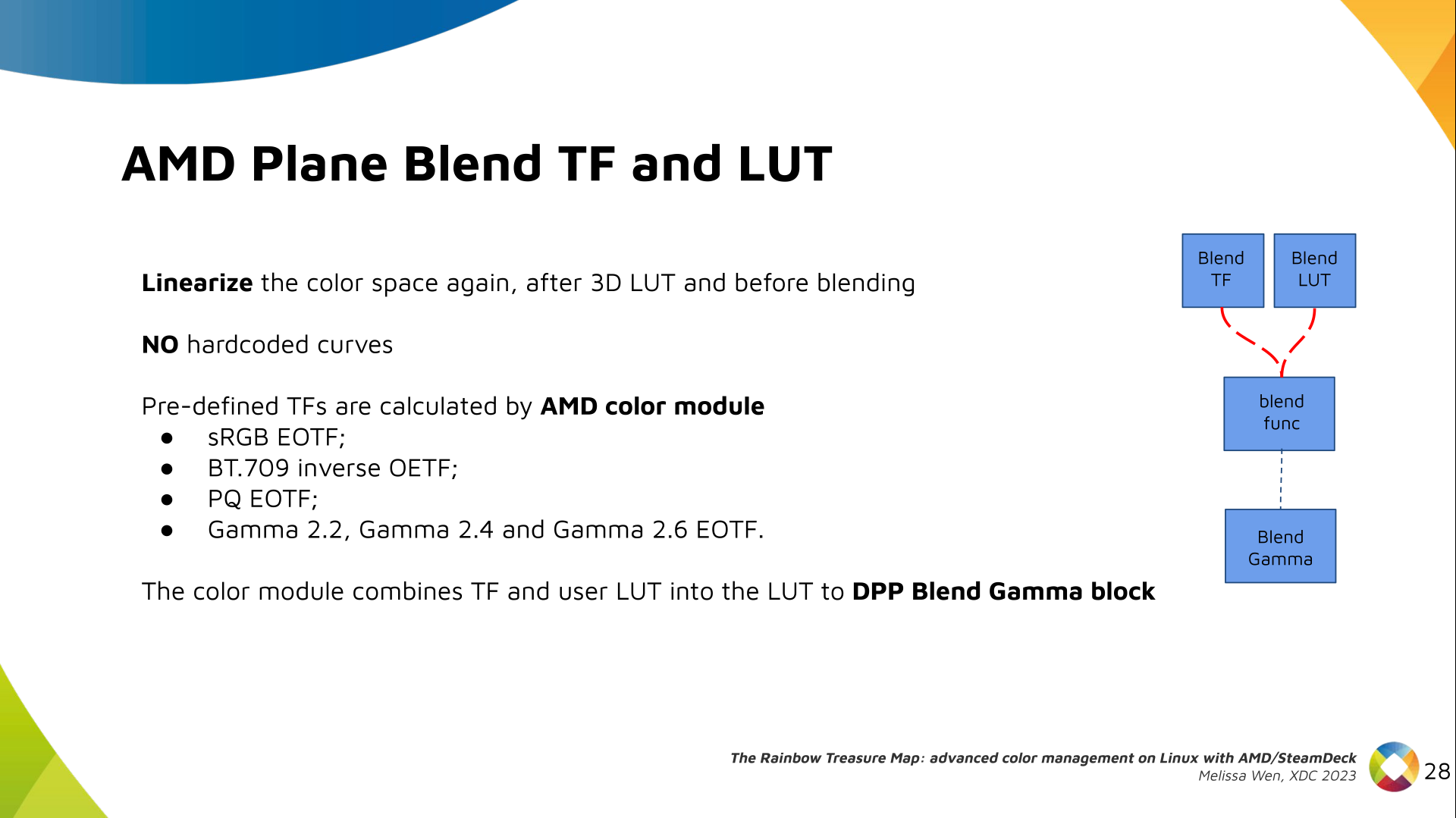

As mentioned, we need a post-3D-LUT curve to linearize the color space before

blending. This is done by Blend TF and LUT.

Similar to shaper TF, there are no hardcoded curves for Blend TF. The

pre-defined curves are the same as the Degamma block, but calculated by the

color module. The resulting LUT goes to the DPP Blend RAM block.

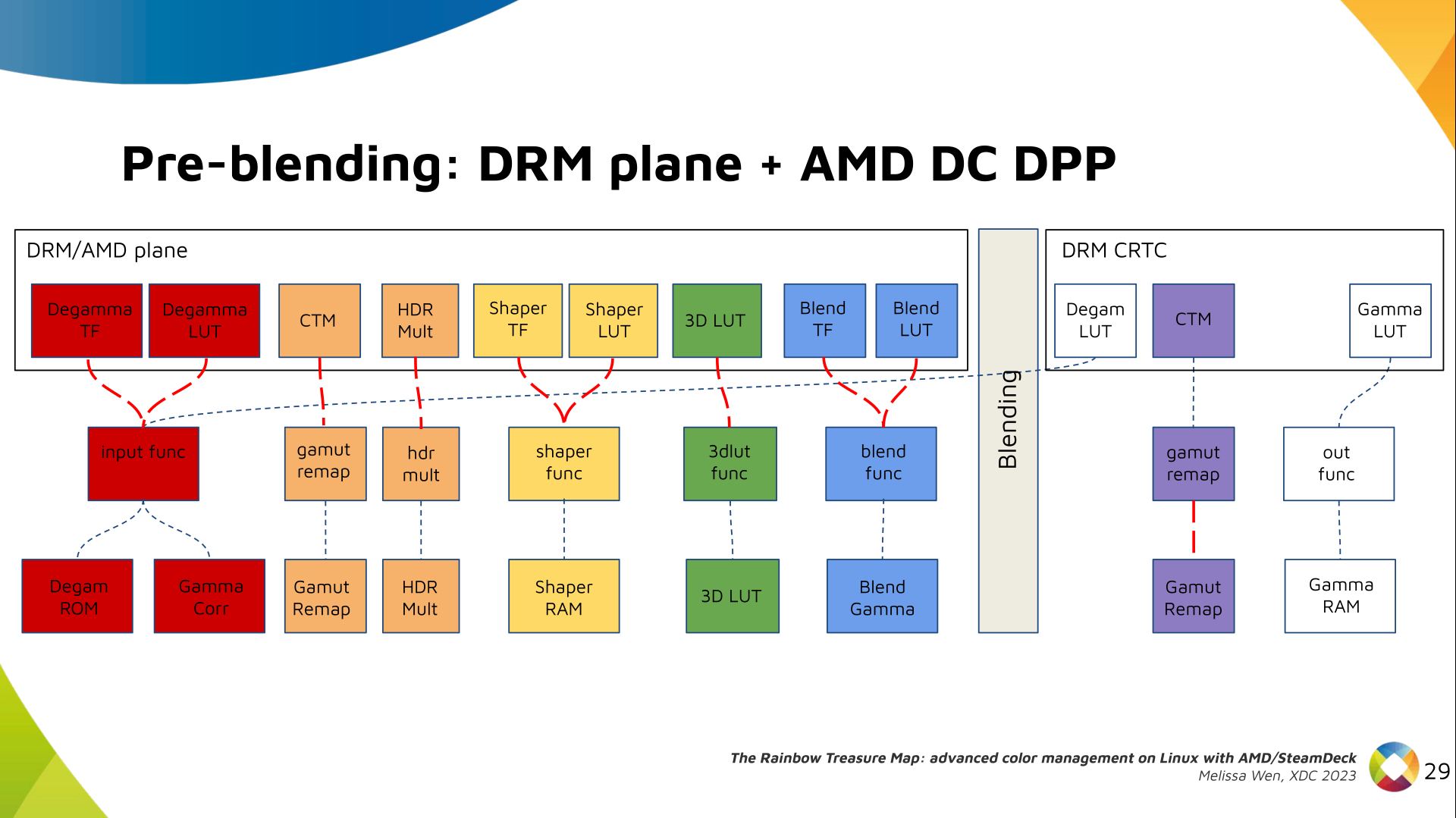

Now we have everything connected before blending. As a conflict between plane and CRTC Degamma was inevitable, our approach doesn't allow both to be set at the same time.

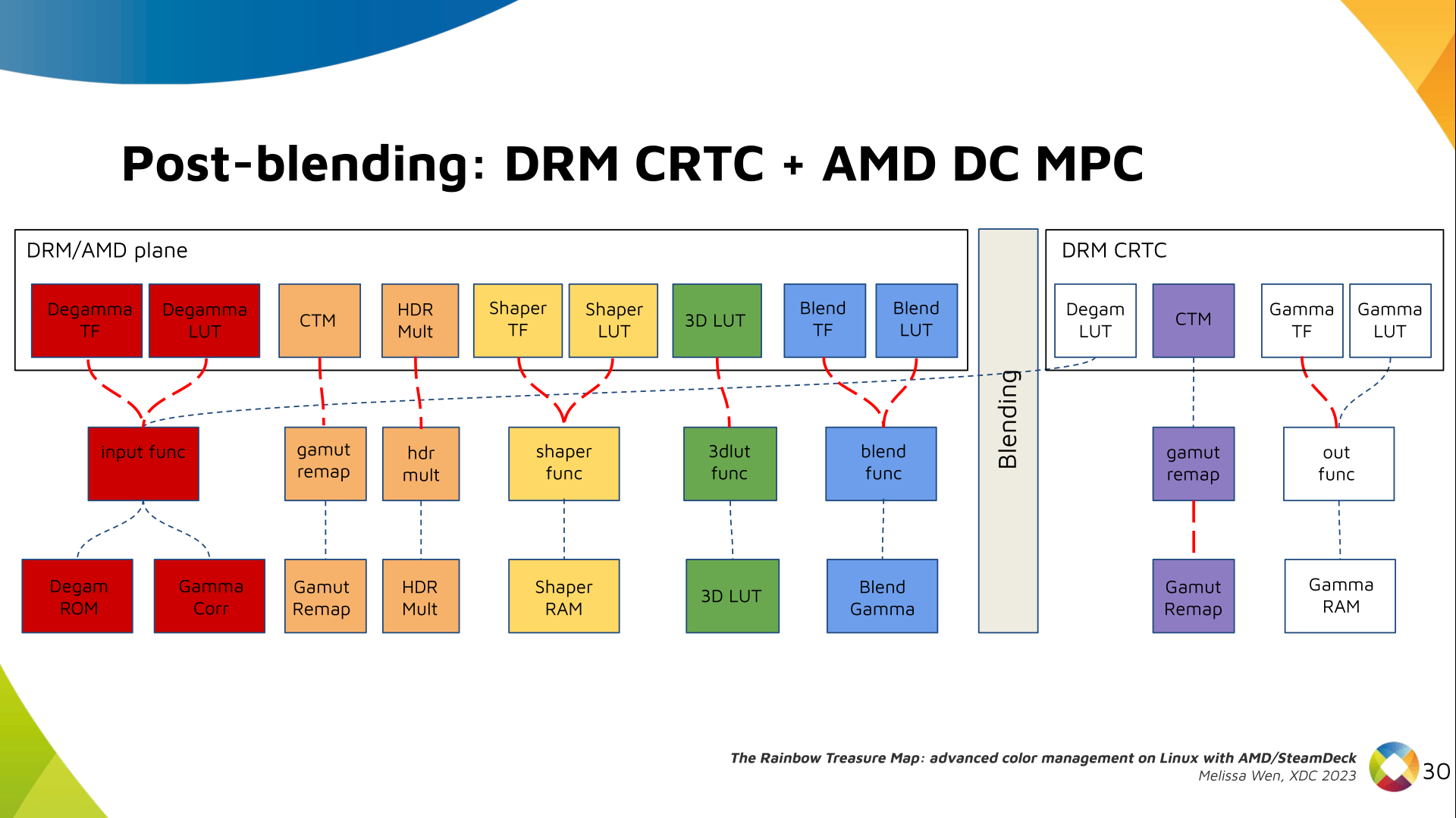

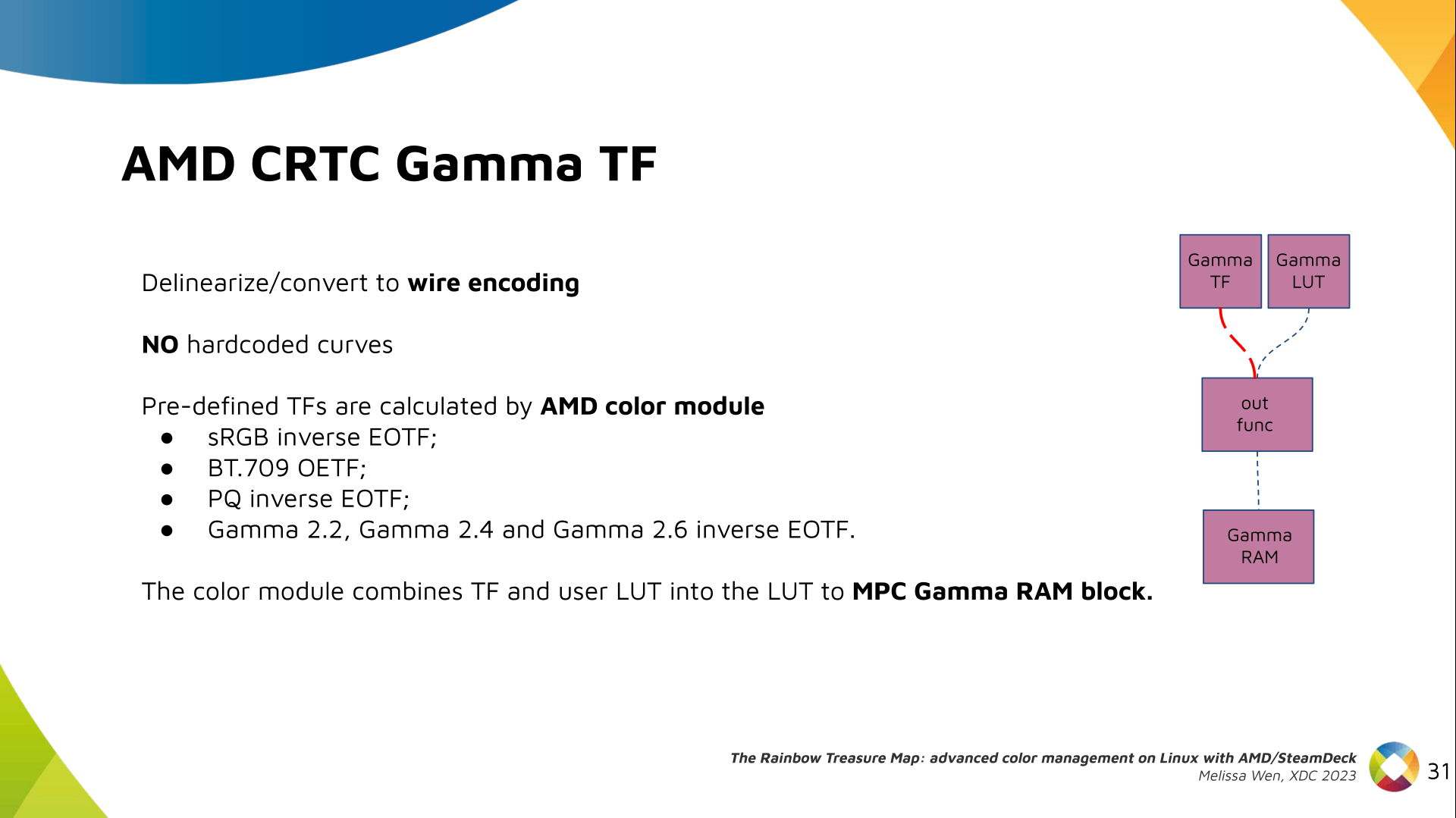

We also optimized the conversion of the framebuffer to wire encoding by adding support for pre-defined CRTC Gamma TF.

Again, there are no hardcoded curves and TF and LUT are combined by the AMD

color module. The same types of shaper curves are supported. The resulting LUT

goes to the MPC Gamma RAM block.

Finally, we arrived at the final version of the DRM/AMD driver-specific color management pipeline. With this knowledge, you're ready to better enjoy the rainbow treasure of AMD display hardware and the world of graphics computing.

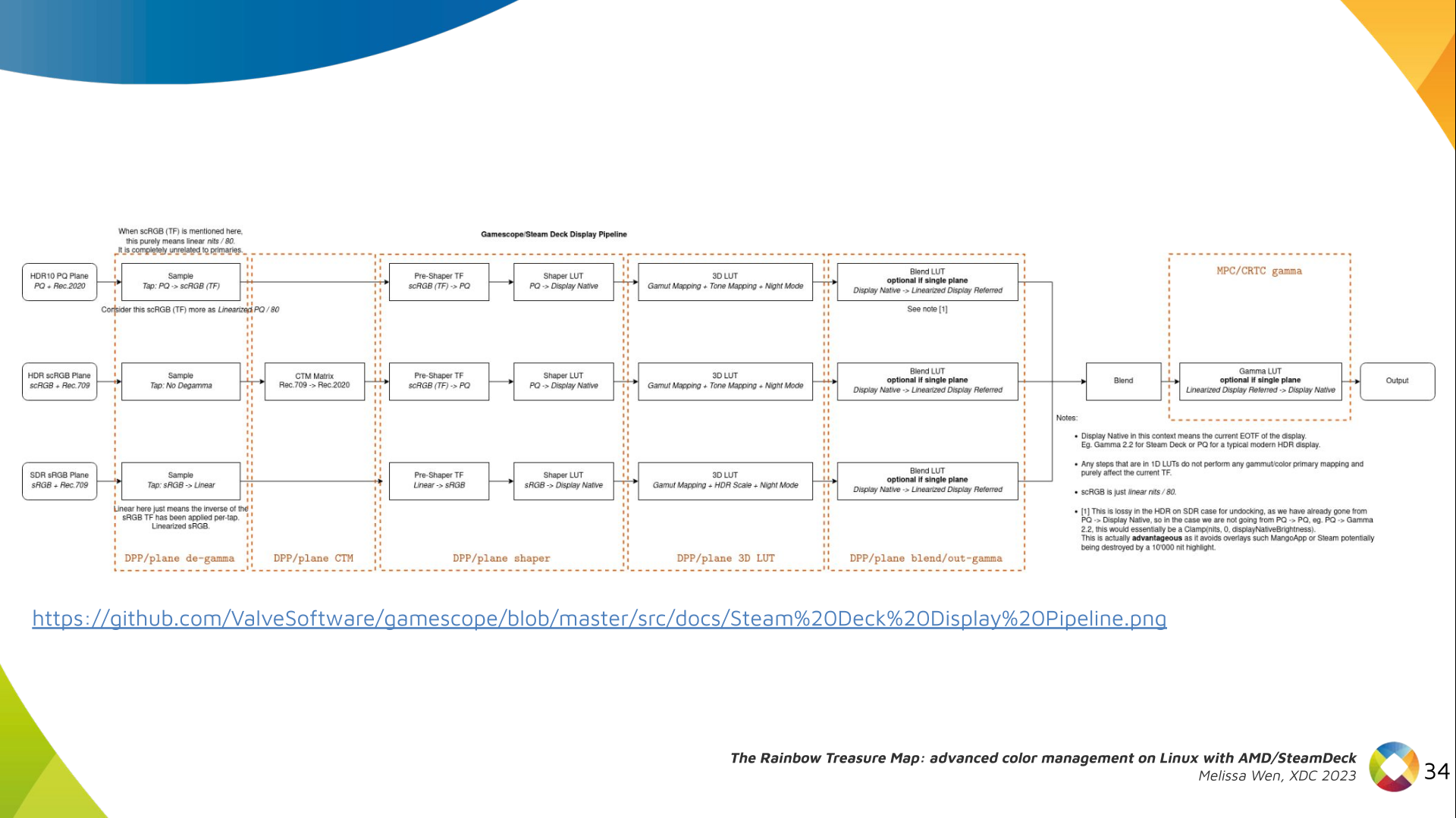

With this work, Gamescope/Steam Deck embraces the color capabilities of the AMD

GPU. We highlight here how we map the Gamescope color pipeline to each AMD

color block.

Future works:

The search for the rainbow treasure is not over! The Linux DRM subsystem

contains many hidden treasures from different vendors. We want more complex

color transformations and adjustments available on Linux. We also want to

expose all GPU color capabilities from all hardware vendors to the Linux

userspace.

Thanks Joshua and Harry for this joint work and the Linux DRI community for all feedback and reviews.

The amazing part of this work comes in the next talk with Joshua and The Rainbow Frogs!

Any questions?

soft_spam/ folder.procmailrc:

# Use spamassassin to check for spam

:0fw: .spamassassin.lock

/usr/bin/spamassassin

# Throw away messages with a score of > 12.0

:0

* ^X-Spam-Level: \*\*\*\*\*\*\*\*\*\*\*\*

/dev/null

:0:

* ^X-Spam-Status: Yes

$HOME/Mail/soft_spam/

# Deliver all other messages

:0:

$DEFAULT
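The recipes above amount to a three-way decision based on the headers SpamAssassin adds. The following Python sketch mirrors that logic so it can be tested outside of procmail; the function name `triage` and the returned labels are hypothetical. Note that the second recipe matches twelve or more asterisks, so in this sketch a message is discarded when the X-Spam-Level header carries at least twelve of them.

```python
import re

DISCARD_STARS = 12  # same number of asterisks as in the recipe above


def triage(headers: dict[str, str]) -> str:
    """Return 'discard', 'soft_spam' or 'inbox' for a filtered message."""
    level = headers.get("X-Spam-Level", "")
    # Count the leading asterisks; the pattern always matches, possibly
    # with an empty string.
    stars = re.match(r"\**", level).end()
    if stars >= DISCARD_STARS:
        return "discard"
    if headers.get("X-Spam-Status", "").startswith("Yes"):
        return "soft_spam"
    return "inbox"


if __name__ == "__main__":
    print(triage({"X-Spam-Level": "*" * 6,
                  "X-Spam-Status": "Yes, score=6.1"}))
```

Keeping the "likely spam" class separate from the discarded one is what makes it possible to review borderline messages later in mutt.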

~/.muttrc configuration to easily report false negatives/positives and examine my likely spam folder via a shortcut in mutt:

unignore X-Spam-Level

unignore X-Spam-Status

macro index S "c=soft_spam/\n" "Switch to soft_spam"

# Tell mutt about SpamAssassin headers so that I can sort by spam score

spam "X-Spam-Status: (Yes|No), (hits|score)=(-?[0-9]+\.[0-9])" "%3"

folder-hook =soft_spam 'push ol'

folder-hook =spam 'push ou'

# <Esc>d = de-register as non-spam, register as spam, move to spam folder.

macro index \ed "<enter-command>unset wait_key\n<pipe-entry>spamassassin -r\n<enter-command>set wait_key\n<save-message>=spam\n" "report the message as spam"

# <Esc>u = unregister as spam, register as non-spam, move to inbox folder.

macro index \eu "<enter-command>unset wait_key\n<pipe-entry>spamassassin -k\n<enter-command>set wait_key\n<save-message>=inbox\n" "correct the false positive (this is not spam)"

bugs.debian.org and lists.debian.org.

Note this second one includes archived copies of some of the SARE rules and so I only use some of the rules in the common/ directory.

Finally, I wrote a few custom rules of my own based on specific kinds of emails I have seen slip through the cracks. I haven't written any of those in a long time and I suspect some of my rules are now obsolete. You may want to do your own testing before you copy these outright.

In addition to rules to match more spam, I've also written a ruleset to remove false positives in French emails coming from many of the above custom rules. I also wrote a rule to give a bonus to any email that comes with a patch:

describe FM_PATCH Includes a patch

body FM_PATCH /\bdiff -pruN\b/

score FM_PATCH -1.0
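To check what the FM_PATCH rule does and does not match, the same pattern can be tried in Python, whose `\b` word-boundary semantics match Perl's for this expression. This is only a test harness under that assumption; the helper name `patch_bonus` is hypothetical and SpamAssassin itself is not involved.

```python
import re

# Same pattern and score as the FM_PATCH rule above.
FM_PATCH = re.compile(r"\bdiff -pruN\b")
FM_PATCH_SCORE = -1.0


def patch_bonus(body: str) -> float:
    """Return the score adjustment the rule would contribute for this body."""
    return FM_PATCH_SCORE if FM_PATCH.search(body) else 0.0


if __name__ == "__main__":
    print(patch_bonus("diff -pruN old/file.c new/file.c"))
    print(patch_bonus("diff -u old/file.c new/file.c"))
```

The rule is deliberately narrow: only patches generated with exactly these diff options get the bonus.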

/etc/spamassassin/, I enable the following plugins:

loadplugin Mail::SpamAssassin::Plugin::AntiVirus

loadplugin Mail::SpamAssassin::Plugin::AskDNS

loadplugin Mail::SpamAssassin::Plugin::ASN

loadplugin Mail::SpamAssassin::Plugin::AutoLearnThreshold

loadplugin Mail::SpamAssassin::Plugin::Bayes

loadplugin Mail::SpamAssassin::Plugin::BodyEval

loadplugin Mail::SpamAssassin::Plugin::Check

loadplugin Mail::SpamAssassin::Plugin::DKIM

loadplugin Mail::SpamAssassin::Plugin::DNSEval

loadplugin Mail::SpamAssassin::Plugin::FreeMail

loadplugin Mail::SpamAssassin::Plugin::FromNameSpoof

loadplugin Mail::SpamAssassin::Plugin::HashBL

loadplugin Mail::SpamAssassin::Plugin::HeaderEval

loadplugin Mail::SpamAssassin::Plugin::HTMLEval

loadplugin Mail::SpamAssassin::Plugin::HTTPSMismatch

loadplugin Mail::SpamAssassin::Plugin::ImageInfo

loadplugin Mail::SpamAssassin::Plugin::MIMEEval

loadplugin Mail::SpamAssassin::Plugin::MIMEHeader

loadplugin Mail::SpamAssassin::Plugin::OLEVBMacro

loadplugin Mail::SpamAssassin::Plugin::PDFInfo

loadplugin Mail::SpamAssassin::Plugin::Phishing

loadplugin Mail::SpamAssassin::Plugin::Pyzor

loadplugin Mail::SpamAssassin::Plugin::Razor2

loadplugin Mail::SpamAssassin::Plugin::RelayEval

loadplugin Mail::SpamAssassin::Plugin::ReplaceTags

loadplugin Mail::SpamAssassin::Plugin::Rule2XSBody

loadplugin Mail::SpamAssassin::Plugin::SpamCop

loadplugin Mail::SpamAssassin::Plugin::TextCat

loadplugin Mail::SpamAssassin::Plugin::TxRep

loadplugin Mail::SpamAssassin::Plugin::URIDetail

loadplugin Mail::SpamAssassin::Plugin::URIEval

loadplugin Mail::SpamAssassin::Plugin::VBounce

loadplugin Mail::SpamAssassin::Plugin::WelcomeListSubject

loadplugin Mail::SpamAssassin::Plugin::WLBLEval

*.pre files.

My ~/.spamassassin/user_prefs file contains the following configuration:

required_hits 5

ok_locales en fr

# Bayes options

score BAYES_00 -4.0

score BAYES_40 -0.5

score BAYES_60 1.0

score BAYES_80 2.7

score BAYES_95 4.0

score BAYES_99 6.0

bayes_auto_learn 1

bayes_ignore_header X-Miltered

bayes_ignore_header X-MIME-Autoconverted

bayes_ignore_header X-Evolution

bayes_ignore_header X-Virus-Scanned

bayes_ignore_header X-Forwarded-For

bayes_ignore_header X-Forwarded-By

bayes_ignore_header X-Scanned-By

bayes_ignore_header X-Spam-Level

bayes_ignore_header X-Spam-Status
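One consequence of these scores is easy to miss: with a threshold of 5, a BAYES_99 hit is enough on its own to mark a message as spam, while BAYES_95 needs at least one more point from other rules. The Python sketch below just does that arithmetic; it assumes a message counts as spam when its total reaches the threshold, and the names are hypothetical.

```python
REQUIRED_SCORE = 5.0  # required_hits from the configuration above

# Bayes scores copied from the configuration above.
BAYES_SCORES = {
    "BAYES_00": -4.0,
    "BAYES_40": -0.5,
    "BAYES_60": 1.0,
    "BAYES_80": 2.7,
    "BAYES_95": 4.0,
    "BAYES_99": 6.0,
}


def is_spam(bayes_rule: str, other_rules_score: float = 0.0) -> bool:
    """Assumption: spam means the total score reaches the threshold."""
    return BAYES_SCORES[bayes_rule] + other_rules_score >= REQUIRED_SCORE


if __name__ == "__main__":
    for rule in BAYES_SCORES:
        print(rule, is_spam(rule))
```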

FuzzyOCR package installed since it has occasionally flagged some spam that other tools had missed. It is a little resource intensive though and so you may want to avoid this one if you are filtering spam for other people.

As always, feel free to leave a comment if you do something else that works

well and that's not included in my setup. This is a work-in-progress.

drm-fixes-<date>.

2) Examine the issue tracker: Confirm that your issue isn't already documented and addressed in the AMD display driver issue tracker. If you find a similar issue, you can team up with others and speed up the debugging process.

[drm] Display Core v... , it's not likely a display driver issue. If this message doesn't appear in your log, the display driver wasn't fully loaded and you will see a notification that something went wrong here.

[drm] Display Core v3.2.241 initialized on DCN 2.1

[drm] Display Core v3.2.237 initialized on DCN 3.0.1

drivers/gpu/drm/amd/display/dc/dcn301. We all know that AMD's shared code is huge, and you can use these boundaries to rule out code unrelated to your issue.
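The initialization message quoted above is easy to pick out of a saved kernel log with a script. The Python sketch below extracts the DC version and the DCN version from such a line. The regular expression is modelled only on the two sample lines shown here, so other hardware generations or kernel versions may print something it does not match; the function name is hypothetical.

```python
import re

# Pattern modelled on the two sample log lines above (assumption: other
# kernels may format this message differently).
DC_INIT = re.compile(
    r"\[drm\] Display Core v(?P<dc>\d+\.\d+\.\d+) initialized on "
    r"(?P<hw>DCN \d+(?:\.\d+)*)"
)


def find_display_core(log_text: str):
    """Return (dc_version, hardware) or None if the message is missing."""
    match = DC_INIT.search(log_text)
    if match is None:
        return None
    return match.group("dc"), match.group("hw")


if __name__ == "__main__":
    line = "[drm] Display Core v3.2.237 initialized on DCN 3.0.1"
    print(find_display_core(line))
```

A `None` result corresponds to the case described above where the display driver was not fully loaded.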

7) Newer families may inherit code from older ones: you can find dcn301 using code from dcn30, dcn20, and dcn10 files. It's crucial to verify which hooks and helpers your driver utilizes to investigate the right portion. You can leverage ftrace for supplemental validation. To give an example, it was useful when I was updating DCN3 color mapping to correctly use their new post-blending color capabilities, such as:

Additionally, you can use two different HW families to compare behaviours. If you see the issue in one but not in the other, you can compare the code and understand what has changed and whether the implementation from a previous family doesn't fit the new HW resources or design well. You can also count on the help of the community on the Linux AMD issue tracker to validate your code on other hardware and/or systems.

This approach helped me debug a 2-year-old issue where the cursor gamma adjustment was incorrect in DCN3 hardware, but working correctly for the DCN2 family. I solved the issue in two steps, thanks to community feedback and validation:

drivers/gpu/drm/amd/display/dc/dcn*/dcn*_resource.c file. More precisely, in the dcn*_resource_construct() function.

Using DCN301 for illustration, here is the list of its hardware caps:

/*************************************************

* Resource + asic cap harcoding *

*************************************************/

pool->base.underlay_pipe_index = NO_UNDERLAY_PIPE;

pool->base.pipe_count = pool->base.res_cap->num_timing_generator;

pool->base.mpcc_count = pool->base.res_cap->num_timing_generator;

dc->caps.max_downscale_ratio = 600;

dc->caps.i2c_speed_in_khz = 100;

dc->caps.i2c_speed_in_khz_hdcp = 5; /*1.4 w/a enabled by default*/

dc->caps.max_cursor_size = 256;

dc->caps.min_horizontal_blanking_period = 80;

dc->caps.dmdata_alloc_size = 2048;

dc->caps.max_slave_planes = 2;

dc->caps.max_slave_yuv_planes = 2;

dc->caps.max_slave_rgb_planes = 2;

dc->caps.is_apu = true;

dc->caps.post_blend_color_processing = true;

dc->caps.force_dp_tps4_for_cp2520 = true;

dc->caps.extended_aux_timeout_support = true;

dc->caps.dmcub_support = true;

/* Color pipeline capabilities */

dc->caps.color.dpp.dcn_arch = 1;

dc->caps.color.dpp.input_lut_shared = 0;

dc->caps.color.dpp.icsc = 1;

dc->caps.color.dpp.dgam_ram = 0; // must use gamma_corr

dc->caps.color.dpp.dgam_rom_caps.srgb = 1;

dc->caps.color.dpp.dgam_rom_caps.bt2020 = 1;

dc->caps.color.dpp.dgam_rom_caps.gamma2_2 = 1;

dc->caps.color.dpp.dgam_rom_caps.pq = 1;

dc->caps.color.dpp.dgam_rom_caps.hlg = 1;

dc->caps.color.dpp.post_csc = 1;

dc->caps.color.dpp.gamma_corr = 1;

dc->caps.color.dpp.dgam_rom_for_yuv = 0;

dc->caps.color.dpp.hw_3d_lut = 1;

dc->caps.color.dpp.ogam_ram = 1;

// no OGAM ROM on DCN301

dc->caps.color.dpp.ogam_rom_caps.srgb = 0;

dc->caps.color.dpp.ogam_rom_caps.bt2020 = 0;

dc->caps.color.dpp.ogam_rom_caps.gamma2_2 = 0;

dc->caps.color.dpp.ogam_rom_caps.pq = 0;

dc->caps.color.dpp.ogam_rom_caps.hlg = 0;

dc->caps.color.dpp.ocsc = 0;

dc->caps.color.mpc.gamut_remap = 1;

dc->caps.color.mpc.num_3dluts = pool->base.res_cap->num_mpc_3dlut; //2

dc->caps.color.mpc.ogam_ram = 1;

dc->caps.color.mpc.ogam_rom_caps.srgb = 0;

dc->caps.color.mpc.ogam_rom_caps.bt2020 = 0;

dc->caps.color.mpc.ogam_rom_caps.gamma2_2 = 0;

dc->caps.color.mpc.ogam_rom_caps.pq = 0;

dc->caps.color.mpc.ogam_rom_caps.hlg = 0;

dc->caps.color.mpc.ocsc = 1;

dc->caps.dp_hdmi21_pcon_support = true;

/* read VBIOS LTTPR caps */

if (ctx->dc_bios->funcs->get_lttpr_caps) {
	enum bp_result bp_query_result;
	uint8_t is_vbios_lttpr_enable = 0;

	bp_query_result = ctx->dc_bios->funcs->get_lttpr_caps(ctx->dc_bios, &is_vbios_lttpr_enable);
	dc->caps.vbios_lttpr_enable = (bp_query_result == BP_RESULT_OK) && !!is_vbios_lttpr_enable;
}

if (ctx->dc_bios->funcs->get_lttpr_interop) {
	enum bp_result bp_query_result;
	uint8_t is_vbios_interop_enabled = 0;

	bp_query_result = ctx->dc_bios->funcs->get_lttpr_interop(ctx->dc_bios, &is_vbios_interop_enabled);
	dc->caps.vbios_lttpr_aware = (bp_query_result == BP_RESULT_OK) && !!is_vbios_interop_enabled;
}

git log and git blame to identify commits targeting the code section you're interested in.

10) Track regressions: If you're examining the amd-staging-drm-next branch, check for regressions between DC release versions. These are defined by DC_VER in the drivers/gpu/drm/amd/display/dc/dc.h file. Alternatively, find a commit with the format drm/amd/display: 3.2.221, which marks a display release. It's useful for bisecting. This information helps you understand how outdated your branch is and identify potential regressions. You can assume that DC_VER is bumped roughly once a week. Finally, check the testing log of each release in the report provided on the amd-gfx mailing list, such as this one Tested-by: Daniel Wheeler:
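Since each DC_VER bump takes around a week, the distance between two release versions gives a rough idea of how far apart two trees are. The Python sketch below parses a version out of a commit subject or a header line and counts the releases in between. The exact form of the `#define DC_VER` line is an assumption, and the function names are hypothetical.

```python
import re


def parse_dc_ver(text: str) -> tuple[int, int, int]:
    """Find an 'x.y.z' display release in a header line or commit subject."""
    match = re.search(r"(\d+)\.(\d+)\.(\d+)", text)
    if match is None:
        raise ValueError(f"no DC version found in {text!r}")
    return tuple(int(part) for part in match.groups())


def releases_between(older: str, newer: str) -> int:
    """Number of DC release bumps separating two versions."""
    old, new = parse_dc_ver(older), parse_dc_ver(newer)
    # Assumption: only the last component changes between weekly releases.
    if old[:2] != new[:2]:
        raise ValueError("versions differ in more than the last component")
    return new[2] - old[2]


if __name__ == "__main__":
    gap = releases_between("drm/amd/display: 3.2.221",
                           '#define DC_VER "3.2.241"')
    print(f"about {gap} weeks apart")
```

The result is only an estimate of elapsed time; for bisecting, the release commits themselves remain the useful boundaries.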

sudo bash -c "echo high > /sys/class/drm/card0/device/power_dpm_force_performance_level"

/* Surface update type is used by dc_update_surfaces_and_stream

* The update type is determined at the very beginning of the function based

* on parameters passed in and decides how much programming (or updating) is

* going to be done during the call.

*

* UPDATE_TYPE_FAST is used for really fast updates that do not require much

* logical calculations or hardware register programming. This update MUST be

* ISR safe on windows. Currently fast update will only be used to flip surface

* address.

*

* UPDATE_TYPE_MED is used for slower updates which require significant hw

* re-programming however do not affect bandwidth consumption or clock

* requirements. At present, this is the level at which front end updates

* that do not require us to run bw_calcs happen. These are in/out transfer func

* updates, viewport offset changes, recout size changes and pixel depth changes.

* This update can be done at ISR, but we want to minimize how often this happens.

*

* UPDATE_TYPE_FULL is slow. Really slow. This requires us to recalculate our

* bandwidth and clocks, possibly rearrange some pipes and reprogram anything front

* end related. Any time viewport dimensions, recout dimensions, scaling ratios or

* gamma need to be adjusted or pipe needs to be turned on (or disconnected) we do

* a full update. This cannot be done at ISR level and should be a rare event.

* Unless someone is stress testing mpo enter/exit, playing with colour or adjusting

* underscan we don't expect to see this call at all.

*/

enum surface_update_type {
	UPDATE_TYPE_FAST, /* super fast, safe to execute in isr */
	UPDATE_TYPE_MED,  /* ISR safe, most of programming needed, no bw/clk change*/
	UPDATE_TYPE_FULL, /* may need to shuffle resources */
};

Source: UMR project documentation


sntrup761 related parts with the patch.

The foundation for lattice-based post-quantum algorithms has some uncertainty around it, and I have felt that there is more to the post-quantum story than adding sntrup761 to implementations. Classic McEliece has been mentioned to me a couple of times, and I took some time to learn it and did a cut'n'paste job of the proposed ISO standard and published draft-josefsson-mceliece in the IETF to make the algorithm easily available to the IETF community. A high-quality implementation of Classic McEliece has been published as libmceliece and I've been supporting the work of Jan Mojžíš to package libmceliece for Debian; alas, it has been stuck in the ftp-master NEW queue for manual review for over two months. The pre-dependencies librandombytes and libcpucycles are available in Debian already.

All that text writing and packaging work set the scene to write some code. When I added support for sntrup761 in libssh, I became familiar with the OpenSSH code base, so it was natural to return to OpenSSH to experiment with a new SSH KEX for Classic McEliece. DJB suggested picking mceliece6688128 and combining it with the existing X25519+sntrup761 or with plain X25519. While a three-algorithm hybrid between X25519, sntrup761 and mceliece6688128 would be a simple drop-in for those who don't want to lose the benefits offered by sntrup761, I decided to start the journey on a pure combination of X25519 with mceliece6688128. The key combiner in sntrup761x25519 is a simple SHA512 call and the only good thing I can say about that is that it is simple to describe and implement, and doesn't raise too many questions since it is already deployed.

After procrastinating coding for months, once I sat down to work it only took a couple of hours until I had a successful Classic McEliece SSH connection. I suppose my brain had sorted everything in the background before I started. To reproduce it, please try the following in a Debian testing environment (I use podman to get a clean environment).

# podman run -it --rm debian:testing-slim

apt update

apt dist-upgrade -y

apt install -y wget python3 librandombytes-dev libcpucycles-dev gcc make git autoconf libz-dev libssl-dev

cd ~

wget -q -O- https://lib.mceliece.org/libmceliece-20230612.tar.gz | tar xfz -

cd libmceliece-20230612/

./configure

make install

ldconfig

cd ..

git clone https://gitlab.com/jas/openssh-portable

cd openssh-portable

git checkout jas/mceliece

autoreconf

./configure # verify 'libmceliece support: yes'

make # CC="cc -DDEBUG_KEX=1 -DDEBUG_KEXDH=1 -DDEBUG_KEXECDH=1"

Run ./ssh -Q kex and it should mention mceliece6688128x25519-sha512@openssh.com.

To have it print plenty of debug outputs, you may remove the # character on the final line, but don't use such a build in production.

You can test it as follows:

./ssh-keygen -A # writes to /usr/local/etc/ssh_host_...

# setup public-key based login by running the following:

./ssh-keygen -t rsa -f ~/.ssh/id_rsa -P ""

cat ~/.ssh/id_rsa.pub > ~/.ssh/authorized_keys

adduser --system sshd

mkdir /var/empty

while true; do $PWD/sshd -p 2222 -f /dev/null; done &

./ssh -v -p 2222 localhost -oKexAlgorithms=mceliece6688128x25519-sha512@openssh.com date
OpenSSH_9.5p1, OpenSSL 3.0.11 19 Sep 2023
...
debug1: SSH2_MSG_KEXINIT sent
debug1: SSH2_MSG_KEXINIT received
debug1: kex: algorithm: mceliece6688128x25519-sha512@openssh.com
debug1: kex: host key algorithm: ssh-ed25519
debug1: kex: server->client cipher: chacha20-poly1305@openssh.com MAC: <implicit> compression: none
debug1: kex: client->server cipher: chacha20-poly1305@openssh.com MAC: <implicit> compression: none
debug1: expecting SSH2_MSG_KEX_ECDH_REPLY
debug1: SSH2_MSG_KEX_ECDH_REPLY received
debug1: Server host key: ssh-ed25519 SHA256:YognhWY7+399J+/V8eAQWmM3UFDLT0dkmoj3pIJ0zXs
...
debug1: Host '[localhost]:2222' is known and matches the ED25519 host key.
debug1: Found key in /root/.ssh/known_hosts:1
debug1: rekey out after 134217728 blocks
debug1: SSH2_MSG_NEWKEYS sent
debug1: expecting SSH2_MSG_NEWKEYS
debug1: SSH2_MSG_NEWKEYS received
debug1: rekey in after 134217728 blocks
...
debug1: Sending command: date
debug1: pledge: fork
debug1: permanently_set_uid: 0/0
Environment:
  USER=root
  LOGNAME=root
  HOME=/root
  PATH=/usr/bin:/bin:/usr/sbin:/sbin:/usr/local/bin
  MAIL=/var/mail/root
  SHELL=/bin/bash
  SSH_CLIENT=::1 46894 2222
  SSH_CONNECTION=::1 46894 ::1 2222
debug1: client_input_channel_req: channel 0 rtype exit-status reply 0
debug1: client_input_channel_req: channel 0 rtype eow@openssh.com reply 0
Sat Dec 9 22:22:40 UTC 2023
debug1: channel 0: free: client-session, nchannels 1
Transferred: sent 1048044, received 3500 bytes, in 0.0 seconds
Bytes per second: sent 23388935.4, received 78108.6
debug1: Exit status 0

Notice the kex: algorithm: mceliece6688128x25519-sha512@openssh.com output.

How about network bandwidth usage? Below is a comparison of a complete SSH client connection, such as the one above, that logs in, prints the date, and logs out. Plain X25519 is around 7kB, X25519 with sntrup761 is around 9kB, and mceliece6688128 with X25519 is around 1MB. Yes, Classic McEliece has large keys, but for many environments, 1MB of data for the session establishment will barely be noticeable.

./ssh -v -p 2222 localhost -oKexAlgorithms=curve25519-sha256 date 2>&1 | grep ^Transferred
Transferred: sent 3028, received 3612 bytes, in 0.0 seconds
./ssh -v -p 2222 localhost -oKexAlgorithms=sntrup761x25519-sha512@openssh.com date 2>&1 | grep ^Transferred
Transferred: sent 4212, received 4596 bytes, in 0.0 seconds
./ssh -v -p 2222 localhost -oKexAlgorithms=mceliece6688128x25519-sha512@openssh.com date 2>&1 | grep ^Transferred
Transferred: sent 1048044, received 3764 bytes, in 0.0 seconds

So how about session establishment time?

date; i=0; while test $i -le 100; do ./ssh -v -p 2222 localhost -oKexAlgorithms=curve25519-sha256 date > /dev/null 2>&1; i=`expr $i + 1`; done; date
Sat Dec 9 22:39:19 UTC 2023
Sat Dec 9 22:39:25 UTC 2023
# 6 seconds
date; i=0; while test $i -le 100; do ./ssh -v -p 2222 localhost -oKexAlgorithms=sntrup761x25519-sha512@openssh.com date > /dev/null 2>&1; i=`expr $i + 1`; done; date
Sat Dec 9 22:39:29 UTC 2023
Sat Dec 9 22:39:38 UTC 2023
# 9 seconds
date; i=0; while test $i -le 100; do ./ssh -v -p 2222 localhost -oKexAlgorithms=mceliece6688128x25519-sha512@openssh.com date > /dev/null 2>&1; i=`expr $i + 1`; done; date
Sat Dec 9 22:39:55 UTC 2023
Sat Dec 9 22:40:07 UTC 2023
# 12 seconds

I never noticed adding sntrup761, so I'm pretty sure I wouldn't notice this increase either. This is all running on my laptop that runs Trisquel, so take it with a grain of salt, but at least the magnitude is clear.
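To put the bandwidth and timing figures side by side, here is a small Python sketch that tallies them. It only re-does arithmetic on the numbers quoted above; the names `measurements`, `RUNS` and `summarize` are made up for illustration, and the per-connection time is a rough average since the loops were timed with one-second resolution.

```python
# Figures copied from the "Transferred:" lines and the timing loops above.
# `while test $i -le 100` starting from i=0 makes 101 connections per loop.
RUNS = 101

measurements = {
    "curve25519-sha256": {"sent": 3028, "received": 3612, "seconds": 6},
    "sntrup761x25519-sha512@openssh.com": {"sent": 4212, "received": 4596, "seconds": 9},
    "mceliece6688128x25519-sha512@openssh.com": {"sent": 1048044, "received": 3764, "seconds": 12},
}


def summarize(m):
    """Return (total bytes per connection, average milliseconds per connection)."""
    total_bytes = m["sent"] + m["received"]
    avg_ms = 1000.0 * m["seconds"] / RUNS
    return total_bytes, avg_ms


if __name__ == "__main__":
    for name, m in measurements.items():
        total_bytes, avg_ms = summarize(m)
        print(f"{name}: {total_bytes} bytes, ~{avg_ms:.0f} ms per connection")
```

Almost all of the extra traffic for Classic McEliece is in the "sent" direction, which is consistent with the client transmitting a large public key.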

Future work items include:

sntrup761 on my machine.

Update 2023-12-26: An initial IETF document draft-josefsson-ssh-mceliece-00 published.

A new release 0.4.20 of RQuantLib

arrived at CRAN earlier today,

and has already been uploaded to Debian as well.

QuantLib is a rather

comprehensive free/open-source library for quantitative

finance. RQuantLib

connects (some parts of) it to the R environment and language, and has

been part of CRAN for more than

twenty years (!!) as it was one of the first packages I uploaded

there.

This release of RQuantLib

brings a few more updates for nags triggered by recent changes in the

upcoming R release (aka r-devel, usually due in April). The Rd parser

now identifies curly braces that lack a preceding macro, usually a typo,

as it was here, which affected three files. The printf (or

alike) format checker found two more small issues. The run-time checker

for examples was unhappy with the callable bond example so we only run

it in interactive mode now. Lastly, I had already commented-out the

setting for a C++14 compilation (required by the remaining Boost

headers) as C++14 has been the default since R 4.2.0 (with suitable

compilers, at least). Those who need it explicitly will have to

uncomment the line in src/Makevars.in. Finally, the expanded

printf format-string checks also revealed the need for a small change

in Rcpp so the development version

(now 1.0.11.5) has that addressed; the change will be part of Rcpp

1.0.12 in January.

Courtesy of my CRANberries, there is also a diffstat report for this release 0.4.20. As always, more detailed information is on the RQuantLib page. Questions, comments etc should go to the rquantlib-devel mailing list. Issue tickets can be filed at the GitHub repo. If you like this or other open-source work I do, you can now sponsor me at GitHub.

Changes in RQuantLib version 0.4.20 (2023-11-26)

- Correct three help pages with stray curly braces

- Correct two printf format strings

- Comment-out explicit selection of C++14

- Wrap one example inside 'if (interactive())' to not exceed total running time limit at CRAN checks

This post by Dirk Eddelbuettel originated on his Thinking inside the box blog. Please report excessive re-aggregation in third-party for-profit settings.

(click for diagram scans as pdfs).

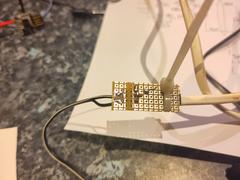

The DigiSpark has just a USB tongue, which is very wobbly in a normal USB socket. I designed a 3D printed case which also had an approximation of the rest of the USB A plug. The plug is out of spec; our printer won t go fine enough - and anyway, the shield is supposed to be metal, not fragile plastic. But it fit in the USB PSU I was using, satisfactorily if a bit stiffly, and also into the connector for programming via my laptop.

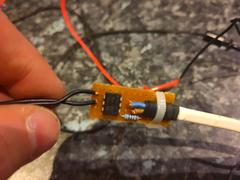

Inside the coffee machine, there s the boundary between the original, coupled to mains, UI board, and the isolated low voltage of the microcontroller. I used a reasonably substantial cable to bring out the low voltage connection, past all the other hazardous innards, to make sure it stays isolated.

I added a drain power supply resistor on another of the GPIOs. This is enabled, with a draw of about 30mA, when the microcontroller is soon going to off / on cycle the coffee machine. That reduces the risk that the user will turn off the smart plug, and turn off the machine, but that the microcontroller turns the coffee machine back on again using the remaining power from USB PSU. Empirically in my setup it reduces the time from smart plug off to microcontroller stops from about 2-3s to more like 1s.

Optoisolator board (inside coffee machine) pictures

(Click through for full size images.)

Microcontroller board (in USB-plug-ish housing) pictures

Implementation - software

I originally used the Arduino IDE, writing my program in C. I had a bad time with that and rewrote it in Rust.

The firmware is in a repository on Debian's GitLab

Results

I can now cause the coffee to start, from my phone. It can be programmed more than 12h in advance. And it stays warm until we've drunk it.

UI is worse

There's one aspect of the original Morphy Richards machine that I haven't improved: the user interface is still poor. Indeed, it's now even worse:

To turn the machine on, you probably want to turn on the smart plug instead. Unhappily, the power button for that is invisible in its installed location.

In particular, in the usual case, if you want to turn it off, you should ideally turn off both the smart plug (which can be done with the button on it) and the coffee machine itself. If you forget to turn off the smart plug, the machine can end up being turned on, very briefly, a handful of times, over the next hour or two.

Epilogue

We had used the new features a handful of times when one morning the coffee machine just wouldn't make coffee. The UI showed it turning on, but it wouldn't get hot, so no coffee. I thought "oh no, I've broken it!"

But, on investigation, I found that the machine's heating element was open circuit (ie, completely broken). I didn't mess with that part. So, hooray! Not my fault. Probably, just being inverted a number of times and generally lightly jostled, had precipitated a latent fault. The machine was a number of years old.

Happily I found a replacement, identical, machine, online. I've transplanted my modification and now it all works well.

Bonus pictures

(Click through for full size images.)

edited 2023-11-26 14:59 UTC in an attempt to fix TOC links

This week I stumbled across a footgun in the Dockerfile/Containerfile ARG

instruction.

ARG is used to define a build-time variable, possibly with a default value

embedded in the Dockerfile, which can be overridden at build-time (by passing

--build-arg). The value of a variable FOO is interpolated into any following

instructions that include the token $FOO.

This behaves somewhat like the existing ENV instruction, which, for

RUN instructions at least, can also be interpolated, but can't (I don't

think) be set at build time, and bleeds through to the resulting image

metadata.

ENV has been around longer, and the documentation indicates that, when both

are present, ENV takes precedence. This fits with my mental model of how

things should work. What the documentation does not make clear is that the

ENV doesn't need to have been defined in the same Dockerfile: environment

variables inherited from the base image also override ARGs.

To me this is unexpected and far less sensible: in effect, if you are building

a layered image and want to use ARG, you have to be fairly sure that your

base image doesn't define an ENV of the same name, either now or in the

future, unless you're happy for their value to take precedence.

In our case, we broke a downstream build process by defining a new environment

variable USER in our image.

To defend against the unexpected, I'd recommend using somewhat unique ARG

names: perhaps prefix something unusual and unlikely to be shadowed. Or don't

use ARG at all, and push that kind of logic up the stack to a Dockerfile

pre-processor like CeKit.
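The precedence rule described above can be captured in a toy model. The Python sketch below is not how Docker or Podman is implemented; it only mirrors the behaviour described in this post. The function `resolve`, its parameters and the example values (`app`, `builder`, `ci`, `MYPROJ_USER`) are all hypothetical.

```python
def resolve(name, base_image_env, dockerfile_env, arg_defaults, build_args):
    """Toy model of what $name expands to in a RUN instruction.

    ENV wins over ARG, and ENV inherited from the base image counts too.
    """
    # Environment from the base image, then ENV lines in this Dockerfile.
    env = {**base_image_env, **dockerfile_env}
    if name in env:
        return env[name]
    # Only a declared ARG can be overridden with --build-arg.
    if name in arg_defaults:
        return build_args.get(name, arg_defaults[name])
    return None


if __name__ == "__main__":
    # The base image starts defining ENV USER; the downstream ARG is shadowed.
    print(resolve("USER", {"USER": "app"}, {}, {"USER": "builder"}, {"USER": "ci"}))
    # A prefixed ARG name is less likely to collide with the base image.
    print(resolve("MYPROJ_USER", {"USER": "app"}, {}, {"MYPROJ_USER": "builder"}, {}))
```

In the first call the `--build-arg` value is silently ignored, which is the kind of downstream breakage described above; the prefixed name in the second call sidesteps it.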

Given a typical install of 3 generic kernel ABIs in the default configuration on a regular-sized VM (2 CPU cores, 8GB of RAM), the following metrics are achieved in Ubuntu 23.10 versus Ubuntu 22.04 LTS:

2x less disk space used (1,417MB vs 2,940MB, including initrd)

3x less peak RAM usage for the initrd boot (68MB vs 204MB)

0.5x increase in download size (949MB vs 600MB)

2.5x faster initrd generation (4.5s vs 11.3s)

approximately the same total time (103s vs 98s, hardware dependent)

For minimal cloud images that do not install either linux-firmware or modules extra the numbers are:

1.3x less disk space used (548MB vs 742MB)

2.2x less peak RAM usage for initrd boot (27MB vs 62MB)

0.4x increase in download size (207MB vs 146MB)
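As a sanity check on the multipliers in the two lists above, the ratios can be recomputed from the raw figures. This Python sketch uses only the numbers quoted in this post; the helper names `reduction_factor` and `relative_increase` are illustrative.

```python
def reduction_factor(old, new):
    """How many times smaller (or faster) the new value is than the old one."""
    return old / new


def relative_increase(old, new):
    """Fractional growth from old to new, e.g. 0.5 means 50% larger."""
    return (new - old) / old


if __name__ == "__main__":
    # Regular-sized VM, Ubuntu 22.04 LTS (old) versus Ubuntu 23.10 (new).
    print(f"disk space:    {reduction_factor(2940, 1417):.2f}x less")
    print(f"peak RAM:      {reduction_factor(204, 68):.2f}x less")
    print(f"download size: {relative_increase(600, 949):.2f}x increase")
    print(f"initrd build:  {reduction_factor(11.3, 4.5):.2f}x faster")
    # Minimal cloud images.
    print(f"disk space:    {reduction_factor(742, 548):.2f}x less")
    print(f"peak RAM:      {reduction_factor(62, 27):.2f}x less")
    print(f"download size: {relative_increase(146, 207):.2f}x increase")
```

Read this way, the "0.5x increase" in download size means the download is roughly one and a half times as large as before.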

Hopefully, the compromise of download size, relative to the disk space & initrd savings is a win for the majority of platforms and use cases. For users on extremely expensive and metered connections, the likely best saving is to receive air-gapped updates or skip updates.

This was achieved by precompressing kernel modules & firmware files with the maximum level of Zstd compression at package build time; making actual .deb files uncompressed; assembling the initrd using split cpio archives - uncompressed for the pre-compressed files, whilst compressing only the userspace portions of the initrd; enabling in-kernel module decompression support with matching kmod; fixing bugs in all of the above, and landing all of these things in time for the feature freeze, whilst leveraging the experience and some of the design choices and implementations we have already been shipping on Ubuntu Core. Some of these changes are backported to Jammy, but only enough to support smooth upgrades to Mantic and later. The complete gains are only available on Mantic and later.

The discovered bugs in kernel module loading code likely affect systems that use LoadPin LSM with kernel space module uncompression as used on ChromeOS systems. Hopefully, Kees Cook or other ChromeOS developers pick up the kernel fixes from the stable trees. Or you know, just use Ubuntu kernels as they do get fixes and features like these first.

The team that designed and delivered these changes is large: Benjamin Drung, Andrea Righi, Juerg Haefliger, Julian Andres Klode, Steve Langasek, Michael Hudson-Doyle, Robert Kratky, Adrien Nader, Tim Gardner, Roxana Nicolescu - and myself Dimitri John Ledkov ensuring the most optimal solution is implemented, everything lands on time, and even implementing portions of the final solution.

Hi, It's me, I am a Staff Engineer at Canonical and we are hiring https://canonical.com/careers.

Lots of additional technical details and benchmarks on a huge range of diverse hardware and architectures, and bikeshedding all the things below:

Like each month, have a look at the work funded by Freexian's Debian LTS offering.

Ubuntu 23.10 Mantic Minotaur Desktop, showing network settings

We released Ubuntu 23.10 Mantic Minotaur on 12 October 2023, shipping its proven and trusted network stack based on Netplan. Netplan is the default tool to configure Linux networking on Ubuntu since 2016. In the past, it was primarily used to control the Server and Cloud variants of Ubuntu, while on Desktop systems it would hand over control to NetworkManager. In Ubuntu 23.10 this disparity in how to control the network stack on different Ubuntu platforms was closed by integrating NetworkManager with the underlying Netplan stack.

Netplan could already be used to describe network connections on Desktop systems managed by NetworkManager. But network connections created or modified through NetworkManager would not be known to Netplan, so it was a one-way street. Activating the bidirectional NetworkManager-Netplan integration allows for any configuration change made through NetworkManager to be propagated back into Netplan. Changes made in Netplan itself will still be visible in NetworkManager, as before. This way, Netplan can be considered the single source of truth for network configuration across all variants of Ubuntu, with the network configuration stored in /etc/netplan/, using Netplan's common and declarative YAML format.

/etc/netplan/. This way, the only thing administrators need to care about when managing a fleet of Desktop installations is Netplan. Furthermore, programmatic access to all network configuration is now easily accessible to other system components integrating with Netplan, such as snapd. This solution has already been used in more confined environments, such as Ubuntu Core and is now enabled by default on Ubuntu 23.10 Desktop.

/etc/NetworkManager/system-connections/ will automatically and transparently be migrated to Netplan's declarative YAML format and stored in its common configuration directory /etc/netplan/.

The same migration will happen in the background whenever you add or modify any connection profile through the NetworkManager user interface, integrated with GNOME Shell. From this point on, Netplan will be aware of your entire network configuration and you can query it using its CLI tools, such as sudo netplan get or sudo netplan status without interrupting traditional NetworkManager workflows (UI, nmcli, nmtui, D-Bus APIs). You can observe this migration on the apt-get command line, watching out for logs like the following:

Setting up network-manager (1.44.2-1ubuntu1.1) ...

Migrating HomeNet (9d087126-ae71-4992-9e0a-18c5ea92a4ed) to /etc/netplan

Migrating eduroam (37d643bb-d81d-4186-9402-7b47632c59b1) to /etc/netplan

Migrating DebConf (f862be9c-fb06-4c0f-862f-c8e210ca4941) to /etc/netplan

/usr/bin/jq -r '."key"' ~/.config/Signal/config.json

Assume the result from that command is 'secretkey', a hexadecimal number representing the key used to encrypt the database. One can then connect to the database and inject the encryption key to get SQL access to the information in the database. Here is an example dumping the database structure:

% sqlcipher ~/.config/Signal/sql/db.sqlite

sqlite> PRAGMA key = "x'secretkey'";

sqlite> .schema

CREATE TABLE sqlite_stat1(tbl,idx,stat);

CREATE TABLE conversations(

id STRING PRIMARY KEY ASC,

json TEXT,

active_at INTEGER,

type STRING,

members TEXT,

name TEXT,

profileName TEXT

, profileFamilyName TEXT, profileFullName TEXT, e164 TEXT, serviceId TEXT, groupId TEXT, profileLastFetchedAt INTEGER);

CREATE TABLE identityKeys(

id STRING PRIMARY KEY ASC,

json TEXT

);

CREATE TABLE items(

id STRING PRIMARY KEY ASC,

json TEXT

);

CREATE TABLE sessions(

id TEXT PRIMARY KEY,

conversationId TEXT,

json TEXT

, ourServiceId STRING, serviceId STRING);

CREATE TABLE attachment_downloads(

id STRING primary key,

timestamp INTEGER,

pending INTEGER,

json TEXT

);

CREATE TABLE sticker_packs(

id TEXT PRIMARY KEY,

key TEXT NOT NULL,

author STRING,

coverStickerId INTEGER,

createdAt INTEGER,

downloadAttempts INTEGER,

installedAt INTEGER,

lastUsed INTEGER,

status STRING,

stickerCount INTEGER,

title STRING

, attemptedStatus STRING, position INTEGER DEFAULT 0 NOT NULL, storageID STRING, storageVersion INTEGER, storageUnknownFields BLOB, storageNeedsSync

INTEGER DEFAULT 0 NOT NULL);

CREATE TABLE stickers(

id INTEGER NOT NULL,

packId TEXT NOT NULL,

emoji STRING,

height INTEGER,

isCoverOnly INTEGER,

lastUsed INTEGER,

path STRING,

width INTEGER,

PRIMARY KEY (id, packId),

CONSTRAINT stickers_fk

FOREIGN KEY (packId)

REFERENCES sticker_packs(id)

ON DELETE CASCADE

);

CREATE TABLE sticker_references(

messageId STRING,

packId TEXT,

CONSTRAINT sticker_references_fk

FOREIGN KEY(packId)

REFERENCES sticker_packs(id)

ON DELETE CASCADE

);

CREATE TABLE emojis(

shortName TEXT PRIMARY KEY,

lastUsage INTEGER

);

CREATE TABLE messages(

rowid INTEGER PRIMARY KEY ASC,

id STRING UNIQUE,

json TEXT,

readStatus INTEGER,

expires_at INTEGER,

sent_at INTEGER,

schemaVersion INTEGER,

conversationId STRING,

received_at INTEGER,

source STRING,

hasAttachments INTEGER,

hasFileAttachments INTEGER,

hasVisualMediaAttachments INTEGER,

expireTimer INTEGER,

expirationStartTimestamp INTEGER,

type STRING,

body TEXT,

messageTimer INTEGER,

messageTimerStart INTEGER,

messageTimerExpiresAt INTEGER,

isErased INTEGER,

isViewOnce INTEGER,

sourceServiceId TEXT, serverGuid STRING NULL, sourceDevice INTEGER, storyId STRING, isStory INTEGER

GENERATED ALWAYS AS (type IS 'story'), isChangeCreatedByUs INTEGER NOT NULL DEFAULT 0, isTimerChangeFromSync INTEGER

GENERATED ALWAYS AS (

json_extract(json, '$.expirationTimerUpdate.fromSync') IS 1

), seenStatus NUMBER default 0, storyDistributionListId STRING, expiresAt INT

GENERATED ALWAYS

AS (ifnull(

expirationStartTimestamp + (expireTimer * 1000),

9007199254740991

)), shouldAffectActivity INTEGER

GENERATED ALWAYS AS (

type IS NULL

OR

type NOT IN (

'change-number-notification',

'contact-removed-notification',

'conversation-merge',

'group-v1-migration',

'keychange',

'message-history-unsynced',

'profile-change',

'story',

'universal-timer-notification',

'verified-change'

)

), shouldAffectPreview INTEGER

GENERATED ALWAYS AS (

type IS NULL

OR

type NOT IN (

'change-number-notification',

'contact-removed-notification',

'conversation-merge',

'group-v1-migration',

'keychange',

'message-history-unsynced',

'profile-change',

'story',

'universal-timer-notification',

'verified-change'

)

), isUserInitiatedMessage INTEGER

GENERATED ALWAYS AS (

type IS NULL

OR

type NOT IN (

'change-number-notification',

'contact-removed-notification',

'conversation-merge',

'group-v1-migration',

'group-v2-change',

'keychange',

'message-history-unsynced',

'profile-change',

'story',

'universal-timer-notification',

'verified-change'

)

), mentionsMe INTEGER NOT NULL DEFAULT 0, isGroupLeaveEvent INTEGER

GENERATED ALWAYS AS (

type IS 'group-v2-change' AND

json_array_length(json_extract(json, '$.groupV2Change.details')) IS 1 AND

json_extract(json, '$.groupV2Change.details[0].type') IS 'member-remove' AND

json_extract(json, '$.groupV2Change.from') IS NOT NULL AND

json_extract(json, '$.groupV2Change.from') IS json_extract(json, '$.groupV2Change.details[0].aci')

), isGroupLeaveEventFromOther INTEGER

GENERATED ALWAYS AS (

isGroupLeaveEvent IS 1

AND

isChangeCreatedByUs IS 0

), callId TEXT

GENERATED ALWAYS AS (

json_extract(json, '$.callId')

));

CREATE TABLE sqlite_stat4(tbl,idx,neq,nlt,ndlt,sample);

CREATE TABLE jobs(

id TEXT PRIMARY KEY,

queueType TEXT STRING NOT NULL,

timestamp INTEGER NOT NULL,

data STRING TEXT

);

CREATE TABLE reactions(

conversationId STRING,

emoji STRING,

fromId STRING,

messageReceivedAt INTEGER,

targetAuthorAci STRING,

targetTimestamp INTEGER,

unread INTEGER

, messageId STRING);

CREATE TABLE senderKeys(

id TEXT PRIMARY KEY NOT NULL,

senderId TEXT NOT NULL,

distributionId TEXT NOT NULL,

data BLOB NOT NULL,

lastUpdatedDate NUMBER NOT NULL

);

CREATE TABLE unprocessed(

id STRING PRIMARY KEY ASC,

timestamp INTEGER,

version INTEGER,

attempts INTEGER,

envelope TEXT,

decrypted TEXT,

source TEXT,

serverTimestamp INTEGER,

sourceServiceId STRING

, serverGuid STRING NULL, sourceDevice INTEGER, receivedAtCounter INTEGER, urgent INTEGER, story INTEGER);

CREATE TABLE sendLogPayloads(

id INTEGER PRIMARY KEY ASC,

timestamp INTEGER NOT NULL,

contentHint INTEGER NOT NULL,

proto BLOB NOT NULL

, urgent INTEGER, hasPniSignatureMessage INTEGER DEFAULT 0 NOT NULL);

CREATE TABLE sendLogRecipients(

payloadId INTEGER NOT NULL,

recipientServiceId STRING NOT NULL,

deviceId INTEGER NOT NULL,

PRIMARY KEY (payloadId, recipientServiceId, deviceId),

CONSTRAINT sendLogRecipientsForeignKey

FOREIGN KEY (payloadId)

REFERENCES sendLogPayloads(id)

ON DELETE CASCADE

);

CREATE TABLE sendLogMessageIds(

payloadId INTEGER NOT NULL,

messageId STRING NOT NULL,

PRIMARY KEY (payloadId, messageId),

CONSTRAINT sendLogMessageIdsForeignKey

FOREIGN KEY (payloadId)

REFERENCES sendLogPayloads(id)

ON DELETE CASCADE

);

CREATE TABLE preKeys(

id STRING PRIMARY KEY ASC,

json TEXT

, ourServiceId NUMBER

GENERATED ALWAYS AS (json_extract(json, '$.ourServiceId')));

CREATE TABLE signedPreKeys(

id STRING PRIMARY KEY ASC,

json TEXT

, ourServiceId NUMBER

GENERATED ALWAYS AS (json_extract(json, '$.ourServiceId')));

CREATE TABLE badges(

id TEXT PRIMARY KEY,

category TEXT NOT NULL,

name TEXT NOT NULL,

descriptionTemplate TEXT NOT NULL

);

CREATE TABLE badgeImageFiles(

badgeId TEXT REFERENCES badges(id)

ON DELETE CASCADE

ON UPDATE CASCADE,

'order' INTEGER NOT NULL,

url TEXT NOT NULL,

localPath TEXT,

theme TEXT NOT NULL

);

CREATE TABLE storyReads (

authorId STRING NOT NULL,

conversationId STRING NOT NULL,

storyId STRING NOT NULL,

storyReadDate NUMBER NOT NULL,

PRIMARY KEY (authorId, storyId)

);

CREATE TABLE storyDistributions(

id STRING PRIMARY KEY NOT NULL,

name TEXT,

senderKeyInfoJson STRING

, deletedAtTimestamp INTEGER, allowsReplies INTEGER, isBlockList INTEGER, storageID STRING, storageVersion INTEGER, storageUnknownFields BLOB, storageNeedsSync INTEGER);

CREATE TABLE storyDistributionMembers(

listId STRING NOT NULL REFERENCES storyDistributions(id)

ON DELETE CASCADE

ON UPDATE CASCADE,

serviceId STRING NOT NULL,

PRIMARY KEY (listId, serviceId)

);

CREATE TABLE uninstalled_sticker_packs (

id STRING NOT NULL PRIMARY KEY,

uninstalledAt NUMBER NOT NULL,

storageID STRING,

storageVersion NUMBER,

storageUnknownFields BLOB,

storageNeedsSync INTEGER NOT NULL

);

CREATE TABLE groupCallRingCancellations(

ringId INTEGER PRIMARY KEY,

createdAt INTEGER NOT NULL

);

CREATE TABLE IF NOT EXISTS 'messages_fts_data'(id INTEGER PRIMARY KEY, block BLOB);

CREATE TABLE IF NOT EXISTS 'messages_fts_idx'(segid, term, pgno, PRIMARY KEY(segid, term)) WITHOUT ROWID;

CREATE TABLE IF NOT EXISTS 'messages_fts_content'(id INTEGER PRIMARY KEY, c0);

CREATE TABLE IF NOT EXISTS 'messages_fts_docsize'(id INTEGER PRIMARY KEY, sz BLOB);

CREATE TABLE IF NOT EXISTS 'messages_fts_config'(k PRIMARY KEY, v) WITHOUT ROWID;

CREATE TABLE edited_messages(

messageId STRING REFERENCES messages(id)

ON DELETE CASCADE,

sentAt INTEGER,

readStatus INTEGER

, conversationId STRING);

CREATE TABLE mentions (

messageId REFERENCES messages(id) ON DELETE CASCADE,

mentionAci STRING,

start INTEGER,

length INTEGER

);

CREATE TABLE kyberPreKeys(

id STRING PRIMARY KEY NOT NULL,

json TEXT NOT NULL, ourServiceId NUMBER

GENERATED ALWAYS AS (json_extract(json, '$.ourServiceId')));

CREATE TABLE callsHistory (

callId TEXT PRIMARY KEY,

peerId TEXT NOT NULL, -- conversation id (legacy) uuid groupId roomId

ringerId TEXT DEFAULT NULL, -- ringer uuid

mode TEXT NOT NULL, -- enum "Direct" "Group"

type TEXT NOT NULL, -- enum "Audio" "Video" "Group"

direction TEXT NOT NULL, -- enum "Incoming" "Outgoing

-- Direct: enum "Pending" "Missed" "Accepted" "Deleted"

-- Group: enum "GenericGroupCall" "OutgoingRing" "Ringing" "Joined" "Missed" "Declined" "Accepted" "Deleted"

status TEXT NOT NULL,

timestamp INTEGER NOT NULL,

UNIQUE (callId, peerId) ON CONFLICT FAIL

);

[ dropped all indexes to save space in this blog post ]

CREATE TRIGGER messages_on_view_once_update AFTER UPDATE ON messages

WHEN

new.body IS NOT NULL AND new.isViewOnce = 1

BEGIN

DELETE FROM messages_fts WHERE rowid = old.rowid;

END;

CREATE TRIGGER messages_on_insert AFTER INSERT ON messages

WHEN new.isViewOnce IS NOT 1 AND new.storyId IS NULL

BEGIN

INSERT INTO messages_fts

(rowid, body)

VALUES

(new.rowid, new.body);

END;

CREATE TRIGGER messages_on_delete AFTER DELETE ON messages BEGIN

DELETE FROM messages_fts WHERE rowid = old.rowid;

DELETE FROM sendLogPayloads WHERE id IN (

SELECT payloadId FROM sendLogMessageIds

WHERE messageId = old.id

);

DELETE FROM reactions WHERE rowid IN (

SELECT rowid FROM reactions

WHERE messageId = old.id

);

DELETE FROM storyReads WHERE storyId = old.storyId;

END;

CREATE VIRTUAL TABLE messages_fts USING fts5(

body,

tokenize = 'signal_tokenizer'

);

CREATE TRIGGER messages_on_update AFTER UPDATE ON messages

WHEN

(new.body IS NULL OR old.body IS NOT new.body) AND

new.isViewOnce IS NOT 1 AND new.storyId IS NULL

BEGIN

DELETE FROM messages_fts WHERE rowid = old.rowid;

INSERT INTO messages_fts

(rowid, body)

VALUES

(new.rowid, new.body);

END;

CREATE TRIGGER messages_on_insert_insert_mentions AFTER INSERT ON messages

BEGIN

INSERT INTO mentions (messageId, mentionAci, start, length)

SELECT messages.id, bodyRanges.value ->> 'mentionAci' as mentionAci,

bodyRanges.value ->> 'start' as start,

bodyRanges.value ->> 'length' as length

FROM messages, json_each(messages.json ->> 'bodyRanges') as bodyRanges

WHERE bodyRanges.value ->> 'mentionAci' IS NOT NULL

AND messages.id = new.id;

END;

CREATE TRIGGER messages_on_update_update_mentions AFTER UPDATE ON messages

BEGIN

DELETE FROM mentions WHERE messageId = new.id;

INSERT INTO mentions (messageId, mentionAci, start, length)

SELECT messages.id, bodyRanges.value ->> 'mentionAci' as mentionAci,

bodyRanges.value ->> 'start' as start,

bodyRanges.value ->> 'length' as length

FROM messages, json_each(messages.json ->> 'bodyRanges') as bodyRanges

WHERE bodyRanges.value ->> 'mentionAci' IS NOT NULL

AND messages.id = new.id;

END;

sqlite>

Finally I have the tool I need to inspect and process Signal

messages, without using the vendor-provided client. Now

on to transforming them into a more useful format.
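As a starting point for that transformation, a minimal Python sketch for dumping one table as JSON might look like the following. It assumes the database has already been opened and decrypted by some other means. Here an in-memory SQLite database stands in for it, using the column layout of the reactions table from the schema above. The helper name rows_as_dicts and the sample row are invented for illustration.

```python
import json
import sqlite3


def rows_as_dicts(conn, table):
    """Return every row of `table` as a dict keyed by column name."""
    # Table names cannot be bound as SQL parameters, so quote the identifier.
    quoted = '"' + table.replace('"', '""') + '"'
    cursor = conn.execute(f"SELECT * FROM {quoted}")
    columns = [col[0] for col in cursor.description]
    return [dict(zip(columns, row)) for row in cursor]


# Stand-in for the real database: same columns as the reactions table
# in the schema dump, filled with one made-up row.
conn = sqlite3.connect(":memory:")
conn.execute(
    """CREATE TABLE reactions(
        conversationId STRING, emoji STRING, fromId STRING,
        messageReceivedAt INTEGER, targetAuthorAci STRING,
        targetTimestamp INTEGER, unread INTEGER, messageId STRING)"""
)
conn.execute(
    "INSERT INTO reactions (conversationId, emoji, fromId, unread, messageId)"
    " VALUES (?, ?, ?, ?, ?)",
    ("conv-1", "thumbs-up", "user-a", 0, "msg-1"),
)

reactions = rows_as_dicts(conn, "reactions")
print(json.dumps(reactions, indent=2))
```

Columns that were not filled in come back as None and are written as null in the JSON output, so the exported records keep the full column set of the table.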

As usual, if you use Bitcoin and want to show your support of my

activities, please send Bitcoin donations to my address

15oWEoG9dUPovwmUL9KWAnYRtNJEkP1u1b.

The DRM/KMS framework provides the atomic API for color management through KMS

properties represented by struct drm_property. We extended the color

management interface exposed to userspace by leveraging existing resources and

connecting them with driver-specific functions for managing modeset properties.

On the AMD DC layer, the interface with hardware color blocks is established.

The AMD DC layer contains OS-agnostic components that are shared across

different platforms, making it an invaluable resource. This layer already

implements hardware programming and resource management, simplifying the external

developer's task. While examining the DC code, we gain insights into the color

pipeline and capabilities, even without direct access to specifications.

Additionally, AMD developers provide essential support by answering queries and

reviewing our work upstream.

The primary challenge involved identifying and understanding relevant AMD DC

code to configure each color block in the color pipeline. However, the ultimate

goal was to bridge the DC color capabilities with the DRM API. For this, we

changed the AMD DM, the OS-dependent layer connecting the

DC interface to the DRM/KMS framework. We defined and managed driver-specific

color properties, facilitated the transport of user space data to the DC, and

translated DRM features and settings to the DC interface. Considerations were

also made for differences in the color pipeline based on hardware capabilities.

Transfer functions come in three types:

- OETF: the opto-electronic transfer function, which converts linear scene light into the video signal, typically within a camera.

- EOTF: the electro-optical transfer function, which converts the video signal into the linear light output of the display.

- OOTF: the opto-optical transfer function, which has the role of applying the rendering intent.

AMD's display driver supports the following pre-defined transfer functions (aka named fixed curves):

- Linear/Unity: linear/identity relationship between pixel value and luminance value;

- Gamma 2.2, Gamma 2.4, Gamma 2.6: pure power functions;

- sRGB: the piece-wise transfer function from IEC 61966-2-1:1999, with a 2.4 exponent in its power segment;

- BT.709: has a linear segment in the bottom part and then a power function with a 0.45 (~1/2.22) gamma for the rest of the range; standardized by ITU-R BT.709-6;

- PQ (Perceptual Quantizer): used for HDR display, allows luminance range capability of 0 to 10,000 nits; standardized by SMPTE ST 2084.

These capabilities vary depending on the hardware block, with some utilizing hardcoded curves and others relying on AMD's color module to construct curves from standardized coefficients. The driver also supports user/custom curves built from a lookup table.
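To make the named curves above more concrete, here is a small floating-point sketch of four of them in Python. The constants are the ones published in IEC 61966-2-1, ITU-R BT.709 and SMPTE ST 2084. The function names are made up for this example, and this is not how the AMD color module computes its curves, only an illustration of the math.

```python
def gamma_eotf(value, exponent=2.2):
    """Pure power function: encoded value in [0, 1] to relative linear light."""
    return value ** exponent


def srgb_eotf(value):
    """Piece-wise sRGB curve: linear segment near black, 2.4 power above it."""
    if value <= 0.04045:
        return value / 12.92
    return ((value + 0.055) / 1.055) ** 2.4


def bt709_oetf(light):
    """BT.709 OETF: linear segment at the bottom, 0.45 power for the rest."""
    if light < 0.018:
        return 4.5 * light
    return 1.099 * light ** 0.45 - 0.099


# SMPTE ST 2084 (PQ) constants.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32


def pq_eotf(value):
    """PQ EOTF: encoded value in [0, 1] to absolute luminance in nits."""
    powered = value ** (1 / M2)
    ratio = max(powered - C1, 0.0) / (C2 - C3 * powered)
    return 10000.0 * ratio ** (1 / M1)


for v in (0.0, 0.5, 1.0):
    print(v, gamma_eotf(v), srgb_eotf(v), bt709_oetf(v), pq_eotf(v))
```

Note that the first three curves work on relative values in the 0 to 1 range, while PQ maps the full-scale code value to an absolute 10,000 nits, which is why it is the one used for HDR.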

In the AMD Display Core Next hardware pipeline, we encounter two hardware

blocks with color capabilities: the Display Pipe and Plane (DPP) and the

Multiple Pipe/Plane Combined (MPC). The DPP handles color adjustments per plane

before blending, while the MPC engages in post-blending color adjustments.

In short, we expect DPP color capabilities to match up with DRM plane

properties, and MPC color capabilities to play nice with DRM CRTC properties.

Note: here's the catch. There are some DRM CRTC color transformations that

don't have a corresponding AMD MPC color block, and vice versa. It's like a

puzzle, and we're here to solve it!
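The split between per-plane and post-blending adjustments can be pictured with a toy model in Python. This is only a conceptual sketch working on a single gray value: the function names are invented, the pure 2.2 power curves are an arbitrary choice, and none of this reflects the actual DPP or MPC programming.

```python
def degamma(value):
    """Per-plane adjustment (DPP-like stage): encoded value to linear light."""
    return value ** 2.2


def regamma(light):
    """Post-blending adjustment (MPC-like stage): linear light to encoded value."""
    return light ** (1 / 2.2)


def blend_pipeline(planes, post_blend):
    """Compose planes bottom to top, then apply one post-blending adjustment.

    Each plane is a tuple (pixel_value, alpha, per_plane_adjustment).
    """
    result = 0.0
    for value, alpha, per_plane in planes:
        adjusted = per_plane(value)                         # before blending
        result = alpha * adjusted + (1.0 - alpha) * result  # blending
    return post_blend(result)                               # after blending


# An opaque black plane with a half-transparent white plane on top.
planes = [(0.0, 1.0, degamma), (1.0, 0.5, degamma)]
output = blend_pipeline(planes, regamma)
print(output)
```

Each plane carries its own adjustment, which is what lets planes with different encodings be brought to a common space before they are mixed, while the post-blending step is applied once to the composed result.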

The color capabilities of each block are described by struct dpp_color_caps and struct mpc_color_caps.

The AMD Steam Deck hardware provides a tangible example of these capabilities.

Therefore, we take the Steam Deck/DCN301 driver as an example and look at the color

pipeline capabilities described in the file:

driver/gpu/drm/amd/display/dcn301/dcn301_resources.c

/* Color pipeline capabilities */

dc->caps.color.dpp.dcn_arch = 1; // If it is a Display Core Next (DCN): yes. Zero means DCE.

dc->caps.color.dpp.input_lut_shared = 0;

dc->caps.color.dpp.icsc = 1; // Input Color Space Conversion (CSC) matrix.

dc->caps.color.dpp.dgam_ram = 0; // The old degamma block for degamma curve (hardcoded and LUT). Gamma correction is the new one.

dc->caps.color.dpp.dgam_rom_caps.srgb = 1; // sRGB hardcoded curve support

dc->caps.color.dpp.dgam_rom_caps.bt2020 = 1; // BT2020 hardcoded curve support (seems not actually in use)

dc->caps.color.dpp.dgam_rom_caps.gamma2_2 = 1; // Gamma 2.2 hardcoded curve support

dc->caps.color.dpp.dgam_rom_caps.pq = 1; // PQ hardcoded curve support

dc->caps.color.dpp.dgam_rom_caps.hlg = 1; // HLG hardcoded curve support